MM4U - A framework for creating personalized multimedia content

Susanne Boll

University of Oldenburg, Computer Science Department

Multimedia and Internet Technologies Group, D-26121 Oldenburg, Germany

E-mail: Susanne.Boll@informatik.uni-oldenburg.de

Abstract

Multimedia content today can be considered as the composition of media elements into an interactive multimedia presentation. Personalization of such multimedia content means that the content reflects the users' context, their specific background and interests, as well as the heterogeneous infrastructure to which the content is delivered and in which it is presented. To achieve personalized multimedia content, however, manually creating many different documents for all the different user contexts is neither feasible nor economical. Rather, a dynamic process of selecting and assembling personalized multimedia content depending on the user context seems reasonable. Support for such a dynamic creation of personalized multimedia presentations, however, is lacking today. Existing multimedia authoring tools do not support dynamic content creation. Systems that already dynamically assemble personalized content, typically found on the Web, remain text-focused. First research approaches in the field of dynamic generation of personalized multimedia content are limited with regard to the expressiveness of the personalization task and the user features they can personalize to. Whenever the content personalization task is more complex, these systems need to employ additional programming. Consequently, with MM4U (short for "multimedia for you"), we propose a generic and modular framework to support multimedia content personalization applications in the multimedia software development process. The framework provides generic components for the typical tasks of multimedia content personalization. In turn, the development of multimedia applications for different users with their different contexts becomes easier and much more efficient. The first sample applications we are developing on the basis of our framework are a personalized multimedia picture gallery and a personalized sightseeing tour.

Keywords: multimedia content personalization, user context driven multimedia authoring, software framework for personalized multimedia applications

1. Introduction

Personalization is the shift from "one size fits all" to a very individual and personal, i.e., "one-to-one", treatment of customers. Though personalization is still something of a hype, there is a clear awareness of personalized content and products, and the technologies are evolving. The development of support for creating personalized multimedia content is part of this effort.

In this paper, we are concerned with providing a software framework to support the dynamic creation of personalized multimedia content. Figure 1 illustrates the general process of dynamically generating a personalized multimedia presentation. Input to this process are media data, the associated meta data, and user profile information. On a request, a "personalization engine" exploits the user profile information and meta data to select the most suitable media data. The selected media elements are then composed in time and space into a coherent, personalized multimedia presentation. As indicated in Figure 1, the result can be, e.g., a personalized multimedia lesson in biology about the drosophila in HTML format, a personalized sightseeing tour of the city of Oldenburg as a SMIL presentation, or a quick sightseeing info on a mobile phone about the Secession building in Vienna¹.
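The selection step of such a personalization engine can be illustrated with a minimal Java sketch (Java 16 or later, for records and `Stream.toList`). It assumes, purely for illustration, that meta data is reduced to keyword sets and the user profile to a set of interests. `ContentSelection`, `MediaElement`, and `selectMostSuitable` are hypothetical names and not part of MM4U. Each media element is scored by how many of its keywords match the user's interests, and the k best-scoring elements are kept.

```java
import java.util.Comparator;
import java.util.List;
import java.util.Set;

// Hypothetical sketch of context-dependent selection; the names are illustrative, not MM4U's API.
final class ContentSelection {

    // Assumed representation: a media element's meta data is reduced to a set of keywords.
    record MediaElement(String uri, Set<String> keywords) {}

    // Number of the element's keywords that also appear among the user's interests.
    static long score(MediaElement m, Set<String> interests) {
        return m.keywords().stream().filter(interests::contains).count();
    }

    // Keeps the k best-scoring elements; elements that match no interest are dropped.
    // Ties keep their input order because sorting an ordered stream is stable.
    static List<MediaElement> selectMostSuitable(List<MediaElement> pool, Set<String> interests, int k) {
        return pool.stream()
                .filter(m -> score(m, interests) > 0)
                .sorted(Comparator.comparingLong((MediaElement m) -> score(m, interests)).reversed())
                .limit(k)
                .toList();
    }
}
```

The subsequent composition step would arrange the selected elements in time and space. A real engine would work with far richer profile and meta data models than keyword overlap.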

We already find well-accepted solutions in what one could call "contributing" technologies for the intended dynamic multimedia content personalization, i.e., collecting and representing user profile information, modeling meta data, storing and retrieving media data, etc. Solutions for the actual "personalization engine" box shown in Figure 1, however, are still lacking. The subsequent section shows in more detail how far research and industry have come with regard to multimedia content personalization, where the problems and challenges lie, and how the proposed MM4U framework can provide substantial progress in the field of personalized multimedia applications. With MM4U, the development of end-user tools for any personalized multimedia experience, such as a personal picture gallery, personalized sightseeing guides, or personalized e-learning applications, becomes simpler and cheaper.

¹ Pictures and content gratefully taken from www.oldenburg.de, www.uni-tuebingen.de, and www.nokia.de

Figure 1. Multimedia Content Personalization (user profile, media data, and meta data are input to a Personalization Engine that performs context-dependent selection of content and context-dependent multimedia composition; the sample output shows a page about the Horst-Janssen-Museum in Oldenburg)

The remainder of this paper is structured as follows: Section 2 reviews related approaches in the field, shows their limitations, and motivates the development of our framework. The MM4U framework itself is presented in Section 3. An overview of the implementation and our sample applications is given in Section 4, before we conclude the paper in Section 5.

2. The limitations of existing approaches

Multimedia authoring tools like Macromedia Director [12] let authors create very sophisticated multimedia presentations in a proprietary format. For this, authors need high expertise in using the tool, and even then they create multimedia presentations that are targeted only at a specific user or user group. Everything "personalizable" would have to be programmed, at high cost, in the tool's programming language. Declarative descriptions of multimedia documents employ a declarative "language" to describe the temporal, spatial, and interactive features of a multimedia presentation. Addressing the aspect of personalization in multimedia documents, the declarative, XML-based approach SMIL [2] allows for the specification of adaptive multimedia presentations by means of so-called "presentation alternatives". The manual authoring of such documents that are adaptable to many different contexts is too complex; also, the existing authoring tools for this are still tedious to handle. Consequently, we have been working on an approach in which a multimedia document is authored for one context and is then "automatically" enriched by the different presentation alternatives needed for the expected user contexts in which the document is to be viewed [4]. However, this approach is reasonable only for a limited number of presentation alternatives and for limited presentation complexity in general.

Approaches that already dynamically create personalized content are typically found on the Web, e.g., Amazon.com [1], and in adaptive hypermedia systems [8] [7]. However, they remain text-centric and are not concerned with the complex composition of media data in time and space into real multimedia presentations. On the pathway to user-centered, on-demand generation of personalized multimedia content, we primarily find research approaches that address the personalization of single media, like the semi-automatic home-video editor Hitchcock [11] or the personalized album MyPhotos [13].

In the area of dynamically generating (personalized) multimedia presentations, only a few research approaches exist: The Cuypers system [14] employs constraints for the description of the intended multimedia presentation and logic programming for the generation of a multimedia document [10]. A generic architecture for the automated construction of multimedia presentations based on transformation sheets and constraints has been presented in [9]. These research solutions typically use a declarative description like style sheets, rules, constraints, configuration files, and the like to express the dynamic, personalized multimedia content creation. However, they can solve only those presentation generation problems that can be covered by such a declarative approach; whenever a complex and application-specific personalization generation task is required, the systems reach their limit and need additional programming to solve the problem. Also, the approaches we find usually rely on fixed data models for describing user profiles, structural presentation constraints, technical infrastructure, rhetorical structure, etc., and use these data models as an input to their personalization engine. The latter evaluates the input data, retrieves the most suitable content, and tries to compose the media as intelligently as possible into a coherent, aesthetic multimedia presentation. A change of the input data models, as well as an adaptation of the presentation generator to more complex presentation generation tasks, is difficult if not infeasible. Additionally, in these approaches the border between the declarative descriptions of content personalization constraints and the additional programming needed is not clear and differs from solution to solution.

Our conclusion is that programming is unavoidable anyway for the generation of complex personalized multimedia content. Consequently, we propose the software framework MM4U, which relieves the developers of personalized multimedia applications from the central content personalization tasks and lets them concentrate on their domain-specific content creation job. The framework provides the necessary interfaces to develop personalized applications and, at the same time, offers a "home" in which existing, specialized (research) approaches can be embedded.

3. The Multimedia Personalization Framework: MM4U

This section presentsMM4U, a generic software

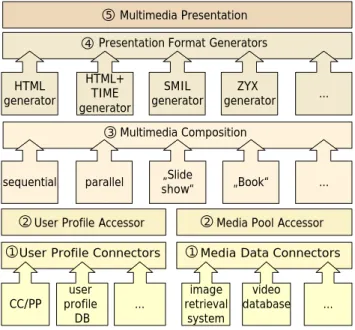

frame-work for the dynamic generation of personalized multime-dia presentations. The framework approach in general has proven to be very successful in areas in which specific solu-tions are needed and in which general basic patterns can be reused, e.g., the Java Media Framework and the Java SWING library in the field of multimedia applications and graphical user interfaces, respectively. Figure 2 illustrates the architecture of our framework. It is divided in several layers which provide modular support for the different tasks of the personalization process as illustrated in Figure 1. The framework is designed to be extensible such by embedding additional modules via well-defined interfaces as indicated by the empty boxes with dots.

In the following, we describe the framework's features along the different layers of the architecture (indicated by the circled numbers in Figure 2). We begin at the bottom of the architecture, which integrates the user profile, media, and meta data information (1 and 2), then present the support for personalized multimedia composition (3), and finally the creation and rendering of the personalized content (4 and 5):

1. Connectors: The components that bring user profile data and media data into the framework are the User Profile Connectors and the Media Data Connectors. The task of the connectors is to integrate existing systems for user profile stores, media storage, and retrieval solutions. As there are many different systems and formats available for user profile information, the User Profile Connectors abstract from the actual access to and retrieval of user profile information and provide suitable interfaces to the profile information. With this component, the different formats and structures of user profile models can be made accessible via a unified interface. On the same level, the Media Data Connectors abstract from the access to media elements in the different media storage and retrieval solutions that are available today. The different systems for storage and content-based retrieval of media data are interfaced by this component.

Figure 2. Overview Multimedia Personalization Framework (layers from bottom to top: (1) User Profile Connectors, e.g., for a CC/PP profile or a user DB, and Media Data Connectors, e.g., for an image retrieval system or a video database; (2) User Profile Accessor and Media Pool Accessor; (3) Multimedia Composition with operators such as sequential, parallel, "Slide show", and "Book"; (4) Presentation Format Generators for HTML, SMIL, HTML+TIME, and ZYX; (5) Multimedia Presentation)

2. Accessors: On top of the Connector components, the Accessor components, i.e., the User Profile Accessor and the Media Pool Accessor, provide the internal data model of the user profiles and media data information within the system. Via these components, the user profile information and media data needed for the desired content personalization are accessible and processable for the application.

The Connectors and Accessors are designed such that the framework's components do not reinvent existing systems for user modeling or multimedia content management. Rather, they provide a seamless integration of these systems through distinct interfaces and comprehensive data models.
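The Connector/Accessor layering is essentially the adapter pattern. The following sketch assumes a deliberately simple internal profile model that maps attribute names to lists of values. All type names are hypothetical, and `InMemoryProfileConnector` merely stands in for a real connector to, e.g., a CC/PP profile or a user database.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

// Hypothetical sketch of the Connector/Accessor layering; names are illustrative, not MM4U's API.
final class ProfileLayers {

    // Unified internal model of a user profile (assumed: attribute name -> values).
    record UserProfile(String userId, Map<String, List<String>> attributes) {
        List<String> get(String attribute) {
            return attributes.getOrDefault(attribute, List.of());
        }
    }

    // Layer 1: abstracts access to one concrete profile store (CC/PP file, database, ...).
    interface UserProfileConnector {
        Optional<Map<String, List<String>>> loadRaw(String userId);
    }

    // Example connector backed by an in-memory map, standing in for a real store.
    static final class InMemoryProfileConnector implements UserProfileConnector {
        private final Map<String, Map<String, List<String>>> store = new HashMap<>();

        void put(String userId, Map<String, List<String>> attributes) {
            store.put(userId, attributes);
        }

        @Override
        public Optional<Map<String, List<String>>> loadRaw(String userId) {
            return Optional.ofNullable(store.get(userId));
        }
    }

    // Layer 2: converts raw connector data into the internal model, asking connectors in order.
    static final class UserProfileAccessor {
        private final List<UserProfileConnector> connectors;

        UserProfileAccessor(List<? extends UserProfileConnector> connectors) {
            this.connectors = List.copyOf(connectors);
        }

        Optional<UserProfile> profileOf(String userId) {
            for (UserProfileConnector connector : connectors) {
                Optional<Map<String, List<String>>> raw = connector.loadRaw(userId);
                if (raw.isPresent()) {
                    return Optional.of(new UserProfile(userId, Map.copyOf(raw.get())));
                }
            }
            return Optional.empty();
        }
    }
}
```

In this design, supporting another profile store means writing one more `UserProfileConnector`. Code that works with `UserProfile` above the Accessor is unaffected.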

3. Multimedia Composition: The Multimedia Composition component resides on top of the User Profile and Media Pool components and employs their information for the composition task. This component comprises abstract operators in compliance with the composition capabilities of multimedia composition models like SMIL [15], Madeus [16], or ZYX [12]. With these operators, an application can carry out the actual composition of the personalized multimedia presentation. The Multimedia Composition component is designed such that additional, possibly more complex or application-specific composition modules can be plugged in seamlessly. This allows even the most complex personalized multimedia composition task to be "just" plugged into the system and used by a multimedia application.

4. Presentation Format Generators: The composition results in an internal representation (data model) of the multimedia content. For its presentation, either a presentation component works directly on this internal model, or the model is converted into another (XML-based) multimedia document format. The presentation format generators are modules that undertake this conversion from the internal composition into a presentation format like SMIL or HTML+TIME.

5. Multimedia Presentation: The Multimedia Presentation component on top of the framework realizes the interface for applications to actually play the presentation in different multimedia presentation formats. Within this component, one can realize a custom presentation module that directly presents the internal representation of the composition. One can also integrate existing presentation components for the common multimedia presentation formats like SMIL or HTML+TIME, which the corresponding underlying Presentation Format Generator produces.
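Layers 3 and 4 can be sketched as a small, format-independent tree built with temporal composition operators, namely the sequential and parallel operators named in Figure 2. A single generator then serializes the tree to SMIL's `<seq>` and `<par>` elements. The model and all names are assumptions for illustration and do not reproduce MM4U's internal data model. Spatial layout and XML escaping are omitted.

```java
import java.util.List;

// Hypothetical sketch: composition operators build an internal model, a generator serializes it.
final class CompositionSketch {

    // Internal, format-independent presentation model (assumed, not MM4U's actual model).
    interface Node {}

    // A single media element; 'kind' is used directly as the SMIL element name (img, video, audio).
    record Media(String kind, String src, int durSeconds) implements Node {}

    // Temporal composition operators: children play one after another, or at the same time.
    record Sequential(List<Node> children) implements Node {}
    record Parallel(List<Node> children) implements Node {}

    static Node seq(Node... children) { return new Sequential(List.of(children)); }
    static Node par(Node... children) { return new Parallel(List.of(children)); }

    // One possible presentation format generator; an HTML+TIME generator would walk the same tree.
    static String toSmil(Node node) {
        StringBuilder sb = new StringBuilder();
        write(node, sb);
        return sb.toString();
    }

    // Recursive serialization; src values are assumed not to need XML escaping.
    private static void write(Node node, StringBuilder sb) {
        if (node instanceof Media m) {
            sb.append('<').append(m.kind())
              .append(" src=\"").append(m.src())
              .append("\" dur=\"").append(m.durSeconds()).append("s\"/>");
        } else if (node instanceof Sequential s) {
            sb.append("<seq>");
            s.children().forEach(child -> write(child, sb));
            sb.append("</seq>");
        } else if (node instanceof Parallel p) {
            sb.append("<par>");
            p.children().forEach(child -> write(child, sb));
            sb.append("</par>");
        } else {
            throw new IllegalArgumentException("unknown node type: " + node);
        }
    }
}
```

For example, `toSmil(seq(par(image, audio), video))` yields a `<seq>` containing a `<par>`, followed by the video element. A complete SMIL document would additionally need the `<smil>`, `<head>`, and `<body>` elements and a layout. Separating the tree from the generator is what lets a new output format be added without touching the composition code.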

Besides these central components that support the actual personalized multimedia composition process, we are developing a set of "utility" components for further tasks that arise in the context of such applications.

4. Implementation and Prototypical Applications

Implementation of the Framework. We have been designing the first version of the framework based on an extensive study of related work in user profiles, media and meta data modeling, as well as the composition features of multimedia document models [5]. We also derived application requirements for the framework from first prototypes of personalized multimedia applications that we developed in different fields, such as a personalized sightseeing tour through Vienna [3], a personalized mobile paper chase game [6], and a personalized multimedia music newsletter.

The framework, its components, classes, and interfaces are specified using the Unified Modeling Language (UML). We have been implementing the framework in Java. The design and development of the framework are carried out as an iterative software development process. Consequently, we implemented a first prototype of the framework, which undergoes constant review and redesign phases. This redesign is triggered by the actual experience of implementing the framework, but also by employing the framework in several application scenarios.

Sample Applications. Currently, we are implementing different application scenarios to demonstrate the applicability of the framework in different application domains. They are the first stress test for the framework. At the same time, the development of the sample applications gives us important feedback about the comprehensiveness and the applicability of the framework.

A personalized sightseeing tour creates a multimedia presentation depending on the specific sightseeing preferences of the user and her presentation device. From video streams and their associated meta data, the sightseeing tour is automatically generated based on the region of the city the visitor wants to see and the type of sightseeing spots she is interested in, like baroque buildings and Art Nouveau (Jugendstil) paintings. The output is generated as a SMIL file and presented in a SMIL presentation environment on a PC or a PDA.
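The selection logic of the sightseeing tour might look roughly as follows. The sketch assumes that each spot carries its region and type as meta data and that its video exists in two encodings, one for PCs and one for PDAs. The record fields, the `Device` enum, and `videosFor` are hypothetical. The resulting list of videos would then be composed sequentially and serialized as SMIL.

```java
import java.util.List;
import java.util.Set;

// Hypothetical sketch of the sightseeing tour selection; fields and encodings are assumptions.
final class SightseeingTour {

    enum Device { PC, PDA }

    // A sightseeing spot with meta data and two encodings of its video stream (assumed).
    record Spot(String name, String region, String type, String pcVideo, String pdaVideo) {}

    // Keeps the spots in the requested region whose type is among the visitor's interests,
    // preserves the input order (e.g., a walking route), and picks the video suited to the device.
    static List<String> videosFor(List<Spot> spots, String region, Set<String> types, Device device) {
        return spots.stream()
                .filter(s -> s.region().equals(region))
                .filter(s -> types.contains(s.type()))
                .map(s -> device == Device.PDA ? s.pdaVideo() : s.pcVideo())
                .toList();
    }
}
```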

The personalized media gallery aims at solving the problem of accessing the huge amount of personal media data at home. Instead of manually organizing the content into fixed albums, a user only needs to annotate the media data. Exploiting the framework, the personalized media gallery selects the most suitable pictures for the request and generates the personalized multimedia presentation. On demand, the application creates multimedia presentations in HTML+TIME or SMIL from the personal media data, e.g., for the next reunion, such as "me and my summer holidays in Italy", "a short story of my life in pictures and videos", or "my sweet kids".
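The following is a minimal sketch of how the gallery could answer a request such as "me and my summer holidays in Italy". It assumes that the request has already been mapped to a set of tags. Only items annotated with all of these tags are selected, and they are ordered by capture date. `MediaGallery`, `Item`, and `select` are illustrative names, not the application's actual code.

```java
import java.time.LocalDate;
import java.util.Comparator;
import java.util.List;
import java.util.Set;

// Hypothetical sketch of annotation-based selection in a personal media gallery.
final class MediaGallery {

    // A user-annotated photo or video (assumed representation of the annotations).
    record Item(String file, LocalDate taken, Set<String> tags) {}

    // Returns the items carrying every tag of the request in chronological order,
    // so that the resulting presentation tells its story in time order.
    static List<Item> select(List<Item> items, Set<String> requiredTags) {
        return items.stream()
                .filter(item -> item.tags().containsAll(requiredTags))
                .sorted(Comparator.comparing(Item::taken))
                .toList();
    }
}
```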

Smart Authoring. Besides the applications sketched above, which generate personalized content based on the framework, we are currently designing a "smart authoring tool", i.e., a multimedia authoring tool for personalized multimedia content that uses the framework. Such a tool can be seen as a specialized application employing the framework to create personalized multimedia content. This authoring tool will provide support for semi-automatic multimedia composition in cases in which an automated creation of multimedia content is not desired, e.g., in a very specific content domain in which an expert is still needed to decide which content to compose. For example, in the e-learning context, one can imagine an author who designs a multimedia course in cardiac surgery and is supported in the different authoring steps of generating a personalized course for a targeted audience. For this, the Multimedia Composition component can be extended by application-specific composition modules to achieve a document structure that suits exactly that content domain and the targeted audience. The Accessor components support the authoring tool by letting the author choose only from those media elements that are suitable for the intended user contexts and that can be adapted to the users' infrastructure. Using the presentation format generators, the authoring tool finally generates the presentations for the different system infrastructures of the targeted users. Thus, the authoring process is guided and specialized with regard to selecting and composing personalized multimedia content. For the development of this authoring tool, the framework fulfils the same function in the process of creating personalized multimedia content as in the application scenarios described above. However, the personalized content is not created at once but step by step during the authoring process.
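The Accessor support for the authoring tool can be pictured as a candidate filter: only media that every targeted user context can present are offered to the author. This sketch models a context merely by the set of media types its infrastructure supports. It ignores media adaptation (e.g., transcoding), which the text also mentions and which would widen the candidate set. All names are hypothetical.

```java
import java.util.List;
import java.util.Set;

// Hypothetical sketch: offering the author only media that all targeted user contexts can present.
final class AuthoringCandidates {

    // A targeted user context, reduced to the media types its infrastructure supports (assumed).
    record TargetContext(String name, Set<String> supportedTypes) {}

    record Media(String uri, String type) {}

    // Keeps the media whose type every targeted context supports; the author picks among these.
    static List<Media> candidates(List<Media> pool, List<TargetContext> targets) {
        return pool.stream()
                .filter(m -> targets.stream().allMatch(t -> t.supportedTypes().contains(m.type())))
                .toList();
    }
}
```

The final choice among the candidates stays with the author, which is the semi-automatic step that distinguishes this tool from the fully automatic applications above.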

5. Conclusion

In this paper, we have presented a generic, modular framework that supports the development of personalized multimedia applications. The framework relieves application developers from the basic personalization tasks when they develop new user-centered multimedia applications. Due to the framework's design, new data models, new composition operators, etc. can easily be integrated into the system, simply by implementing the respective new module. The framework approach itself does not compete with other approaches in the field but rather embraces them. The modular architecture of the framework allows existing technology to be integrated and embedded at all levels: new user profile models can be integrated by creating a new connector; the same applies to new media storage and retrieval solutions. New, complex, or application-specific composition capabilities, like an intelligent constraint solving system or a specific rhetorical construction of a presentation within a specific composition operator, can be integrated into the Multimedia Composition component. As the framework is independent of the presentation format, a new or enhanced multimedia presentation format can be embedded with a corresponding new presentation format generator.

The framework provides a shelf of generic building blocks for personalization, which can be employed and adjusted to specific needs. Consequently, providers of personalized multimedia software can profit from such a multimedia software framework, as it supports the modular, stepwise advancement of their applications; newly developed parts of the framework might be reused in different product lines. In this way, user-centered multimedia content applications can be developed more efficiently.

References

[1] Amazon, Inc., USA. amazon.com, 1996-2003. URL: http://www.amazon.com.

[2] J. Ayars, D. Bulterman, A. Cohen, K. Day, E. Hodge, P. Hoschka, et al. Synchronized Multimedia Integration Language (SMIL 2.0) Specification. W3C Recommendation, Aug. 7 2001. URL: http://www.w3.org/TR/smil20/.

[3] S. Boll. Vienna 4 U - What Web Services can do for personalized multimedia applications. In Proc. of the Seventh Multi-Conference on Systemics, Cybernetics and Informatics (SCI 2003), Orlando, Florida, USA, July 27–30 2003.

[4] S. Boll, W. Klas, and J. Wandel. A Cross-Media Adaptation Strategy for Multimedia Presentations. In Proc. of the ACM Multimedia Conf. '99, Part 1, pages 37–46, Orlando, Florida, USA, November 1999.

[5] S. Boll, W. Klas, and U. Westermann. Multimedia Document Formats - Sealed Fate or Setting Out for New Shores? In Proc. of IEEE Int. Conf. on Multimedia Computing and Systems (ICMCS'99), Florence, Italy, June 1999. IEEE Computer Society.

[6] S. Boll, J. Krösche, and C. Wegener. Paper chase revisited - a Real World Game meet Hypermedia (short paper). In Proc. of the Intl. Conference on Hypertext (HT03), Nottingham, United Kingdom, August 26–30 2003.

[7] P. De Bra, P. Brusilovsky, and R. Conejo. Proc. of the Second Intl. Conf. on Adaptive Hypermedia and Adaptive Web-Based Systems. Springer LNCS 2347, Malaga, Spain, 2002.

[8] P. Brusilovsky, A. Kobsa, and J. Vassileva. Adaptive Hypertext and Hypermedia. Kluwer Acad. Publ., Dordrecht, 1998.

[9] M. J. F. Bes and F. Khantache. A Generic Architecture for Automated Construction of Multimedia Presentations. In Intl. Conf. on Multimedia Modeling (MMM 2001), Amsterdam, The Netherlands, Nov. 5-7 2001.

[10] J. Geurts, J. van Ossenbruggen, and L. Hardman. Application-specific constraints for Multimedia Presentation Generation. In Intl. Conf. on Multimedia Modeling (MMM 2001), Amsterdam, The Netherlands, Nov. 5-7 2001.

[11] A. Girgensohn, S. Bly, F. Shipman, J. Boreczky, and L. Wilcox. Home Video Editing Made Easy - Balancing Automation and User Control. In Proc. of Human-Computer Interaction, pages 464–471, Tokyo, Japan, 2001.

[12] Macromedia, Inc., San Francisco, USA. Macromedia Director Shockwave 8, 2003. URL: http://www.macromedia.com.

[13] Y. Sun, H. Zhang, L. Zhan, and M. Li. MyPhotos - A System for Home Photo Management and Processing. In Proc. of ACM Multimedia Conf. (ACMMM 2002), Juan-les-Pins, France, Dec. 1-6 2002.

[14] J. van Ossenbruggen, F. Cornelissen, J. Geurts, L. Rutledge, and L. Hardman. Cuypers: a semi-automatic hypermedia presentation system. Techn. Rep. INS-R0025, CWI, The Netherlands, Dec. 2000.