Peer reviewed

Performance Metrics in Clinical Trials

Intertwining Quality Management Systems with Metrics to Improve Trial Quality

Liz Wool, RN, BSN, CCRA, CMT

Managing quality in clinical trials is the focus of daily activities that, per the Clinical Trials Transformation Initiative (CTTI), substantiate the clinical research community’s ability “to effectively and efficiently answer the intended question about the benefits and risks of a medical product (therapeutic or diagnostic) or procedure while assuring protection of human subjects.”¹

At the Drug Information Association’s 2011 Annual Meeting, a presenter from the European Medicines Agency (EMA) provided a description of “quality” as “sufficient to support the decision-making process on medicines throughout the clinical development and postmarketing authorization.”²

With increasing protocol complexity, technological capabilities, and globalization of research, a prospective, systematic, and methodical approach to ensuring quality in clinical trials is needed and is being advocated by regulators. Additionally, both the Food and Drug Administration (FDA) and EMA are collaborating with all stakeholders in clinical research to define and describe the elements of quality by design (QbD) in the context of clinical trial conduct. QbD incorporates the elements of a quality management system, benchmarked against the International Conference on Harmonization (ICH) Q7 through Q10 documents and the International Organization for Standardization’s ISO 9000 standards for quality management systems.

In May 2012, the EMA hosted a workshop that focused on a reflection paper by the agency’s Good Clinical Practice (GCP) International Working Group about risk-based quality management in clinical trials. The group requested input from various stakeholders (including ACRP) on what the QbD elements, context, and framework are for clinical trials. Similarly, the FDA and CTTI are launching the QbD workstream in 2012.

For clinical researchers in the 21st century, stating that we have standard operating procedures (SOPs) and training does not define a quality management system. This article describes a targeted review of a quality management system that, when adhered to in its entirety, provides the organization with steps for defining, planning, monitoring, measuring, and continuously improving the quality of its work, leading to the inherent ability to identify, analyze, and address possible performance issues through the appropriate use of metrics.

Quality Management System

Rather than focus solely on GCP compliance, SOPs, processes, forms, and training, there needs to be a renewed focus on “building quality within an organization” that inherently possesses the culture of quality.³ A quality


A quality management system

provides the prospective, systematic,

methodical, scientifically based

frame-work to plan, manage, monitor, and

measure the quality of the organization

and its performance throughout the

clinical trial and product development

lifecycles. Specifically, the quality

man-agement system establishes the

stan-dards under which work will be

per-formed and how the organization and

personnel perform and document their

assigned clinical trial activities, duties,

and functions. An additional

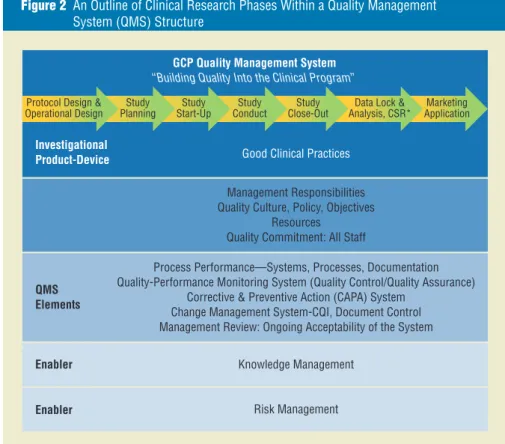

illustra-tion is noted in Figure 2, which outlines

the phases of product development for

clinical research benchmarking to

simi-lar illustrations for the ICH Q10

Phar-maceutical Quality System Guideline.

7This network system of interrelated

processes provides uniformity and

con-sistency for people and actions,

describ-ing the work performed and how the

work is documented, as outlined in

Fig-ure 3. In the clinical trials context, this

quality data obtained without compromising the protection of human subjects’ rights and welfare.

After implementing a quality management system, assessing, monitoring, and measuring how well the organization is performing to the established standards and methods is required. Using visual inspection and confirmation, document review, data analytics and review, and metrics, the organization implements the foundational cornerstones for assessing its performance.

Elements of a Quality Management System

The overarching framework for a quality management system is illustrated in Figure 1, which visualizes the critical “plan, do, check, act” approach of a committed organization to quality through the development, implementation, and maintenance of a quality management system.

management system sets out the standards to be achieved and the method to meet them. The system should define what people, actions, and documents should be employed to conduct the work in a consistent manner, leaving evidence of what has happened. It may include manuals, handbooks, procedures, policies, records, and templates.⁴

In 1946, the International Organization for Standardization (ISO) was founded, and in 1987, it published the first ISO 9000 standard for quality management systems.⁵ Many people believe that ISO 9000 focuses only on manufacturing of products; however, ISO 9001:2008 was written such that small businesses (e.g., consulting firms) can implement ISO 9000 for their organizations.

The ISO definition for quality states that “the quality of something can be determined by comparing a set of inherent characteristics with a set of requirements.” With this in mind, this article will discuss the quality management system principles espoused in ISO 9000-9001, extrapolating their use to the global clinical research arena. Additional terms that organizations may use to describe their quality management system include clinical quality system, integrated quality management, quality management, or total quality management.

From a practical standpoint, it is important to understand how the elements and components of an organization’s quality management system relate to this article’s description of a quality management system in order to perform a comprehensive gap analysis. Due to space limitations, alternative theories and methods will not be discussed here.

Kleppinger and Ball, in their article “Building Quality into Clinical Trials with the Use of a Quality Systems Approach,” describe the utility and application of ISO 9000-9001 quality management systems principles for clinical trial planning, execution, ongoing monitoring, and continuous improvement during the clinical trial lifecycle.⁶ The authors assert that, even though a quality system does not impose something totally new on clinical research, a systematic approach will produce a more reliable and useful end product, that is, high-

Figure 1 Framework for a Quality Management System

[Figure 1 elements: Training; Performance Dashboards; Process Monitoring and Analyses; Change Control; Deviation Management; Corrective and Preventive Action Program; Process Improvement; GCP Quality Assurance Unit, Annual Audit Plan; Issue Escalation; Management Responsibility, Management Review of QMS; Metrics System; Quality Management System (QMS) Framework; Risk Management; Quality Policy, Quality Manual; Procedural Documents, Document]

execution of the clinical trial to the established quality standards. Specifically, quality metrics provide the ability to measure progress to the standards and goals defined by the organization. Quality metrics are reported as the “error rate,” and require predefined tolerance limits for effective monitoring, measurement, and reporting of quality and compliance signals to the organization. Note that what most organizations refer to as “key quality indicators” are a subset of performance indicators. For example, a site monitoring report may be completed on time; however, is the report completed correctly per the standards outlined in the monitoring visit report completion guidelines, monitoring visit report template, monitoring plan, and monitoring SOP? Did the CRA/monitor capture critical issues, protocol deviations, or violations in the monitoring report as identified by either database review of deviation listings or during an onsite quality assessment visit that was performed by his or her supervisor or the sponsor? Additional examples are described in Table 2.

Upon identification of a metric that has either met or is out of range of the predetermined tolerance limits, the next

framework establishes a reliable network of commitments throughout the organization and business enterprise, which each employee of and contributor to the research site knows and understands and to which everyone performs. Thereby, the organization or business establishes, maintains, and manages this network of commitments, which supports building quality into the clinical trial practices.⁸

Inherent in a quality management system is documenting the work performed, evaluating deviations from the established quality standards and controls, and taking the necessary actions for immediate and continuous process improvement. This framework for quality is not so different from that used in hospitals and medical institutions, which must obtain and maintain accreditation of their facility, per country/state requirements. Therefore, a quality management systems approach in clinical research is a further extension of delivering quality care for those patients who volunteer to participate in clinical research.

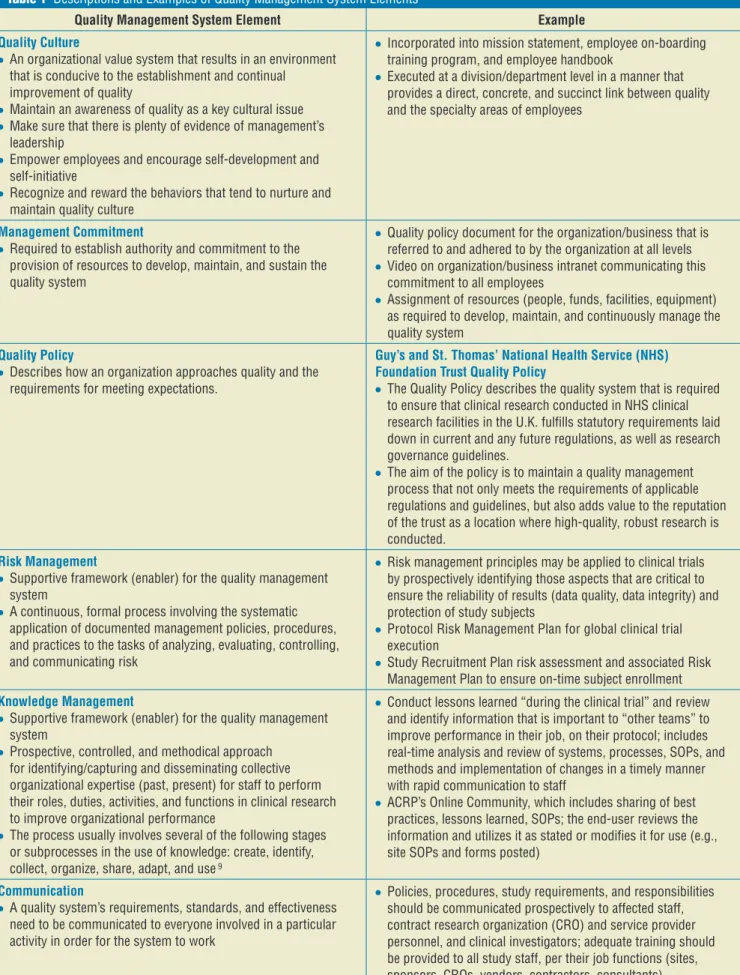

Table 1 presents a targeted description and application (examples) of critical aspects of both the quality management system framework and the plan, do, check, act principles.

Metrics: Reporting Performance of the Quality Management System

When defined and used appropriately, metrics are an effective tool for monitoring and measuring the performance of an organization’s quality system and

Figure 2 An Outline of Clinical Research Phases Within a Quality Management System (QMS) Structure

[Figure 2 content: GCP Quality Management System, “Building Quality Into the Clinical Program.” Phases: Protocol Design & Operational Design; Study Planning; Study Start-Up; Study Conduct; Study Close-Out; Data Lock & Analysis, CSR*; Marketing Application. Scope labels: Investigational Product-Device; Good Clinical Practices. QMS Elements: Management Responsibilities; Quality Culture, Policy, Objectives; Resources; Quality Commitment: All Staff; Process Performance: Systems, Processes, Documentation; Quality-Performance Monitoring System (Quality Control/Quality Assurance); Corrective & Preventive Action (CAPA) System; Change Management System-CQI, Document Control; Management Review: Ongoing Acceptability of the System. Enablers: Knowledge Management; Risk Management.]

Figure 3 The Network of Interrelated Processes

[Figure 3 content: Network of interrelated processes with each process made up of: People; Work; Supplies, Tools, Equipment; Reports, Materials; Resources, Rules, Regulations; Records, Documents, Forms; Activities, Tasks.]

Table 1 Descriptions and Examples of Quality Management System Elements

Quality Management System Element | Example

Quality Culture
● An organizational value system that results in an environment that is conducive to the establishment and continual improvement of quality
● Maintain an awareness of quality as a key cultural issue
● Make sure that there is plenty of evidence of management’s leadership
● Empower employees and encourage self-development and self-initiative
● Recognize and reward the behaviors that tend to nurture and maintain quality culture
● Incorporated into mission statement, employee on-boarding training program, and employee handbook
● Executed at a division/department level in a manner that provides a direct, concrete, and succinct link between quality and the specialty areas of employees

Management Commitment
● Required to establish authority and commitment to the provision of resources to develop, maintain, and sustain the quality system
● Quality policy document for the organization/business that is referred to and adhered to by the organization at all levels
● Video on organization/business intranet communicating this commitment to all employees
● Assignment of resources (people, funds, facilities, equipment) as required to develop, maintain, and continuously manage the quality system

Quality Policy
● Describes how an organization approaches quality and the requirements for meeting expectations.
Guy’s and St. Thomas’ National Health Service (NHS) Foundation Trust Quality Policy
● The Quality Policy describes the quality system that is required to ensure that clinical research conducted in NHS clinical research facilities in the U.K. fulfills statutory requirements laid down in current and any future regulations, as well as research governance guidelines.
● The aim of the policy is to maintain a quality management process that not only meets the requirements of applicable regulations and guidelines, but also adds value to the reputation of the trust as a location where high-quality, robust research is conducted.

Risk Management
● Supportive framework (enabler) for the quality management system
● A continuous, formal process involving the systematic application of documented management policies, procedures, and practices to the tasks of analyzing, evaluating, controlling, and communicating risk
● Risk management principles may be applied to clinical trials by prospectively identifying those aspects that are critical to ensure the reliability of results (data quality, data integrity) and protection of study subjects
● Protocol Risk Management Plan for global clinical trial execution
● Study Recruitment Plan risk assessment and associated Risk Management Plan to ensure on-time subject enrollment

Knowledge Management
● Supportive framework (enabler) for the quality management system
● Prospective, controlled, and methodical approach for identifying/capturing and disseminating collective organizational expertise (past, present) for staff to perform their roles, duties, activities, and functions in clinical research to improve organizational performance
● The process usually involves several of the following stages or subprocesses in the use of knowledge: create, identify, collect, organize, share, adapt, and use⁹
● Conduct lessons learned “during the clinical trial” and review and identify information that is important to “other teams” to improve performance in their job, on their protocol; includes real-time analysis and review of systems, processes, SOPs, and methods and implementation of changes in a timely manner with rapid communication to staff
● ACRP’s Online Community, which includes sharing of best practices, lessons learned, SOPs; the end-user reviews the information and utilizes it as stated or modifies it for use (e.g., site SOPs and forms posted)

Communication
● A quality system’s requirements, standards, and effectiveness need to be communicated to everyone involved in a particular activity in order for the system to work
● Policies, procedures, study requirements, and responsibilities should be communicated prospectively to affected staff, contract research organization (CRO) and service provider personnel, and clinical investigators; adequate training should be provided to all study staff, per their job functions (sites, sponsors, CROs, vendors, contractors, consultants)

Job Responsibilities and Assessment of Personnel Competencies
● Job descriptions are present and current, and there is a methodology for continuously reviewing and updating them according to changes in the regulatory landscape and other job responsibilities
● Do personnel possess competencies, knowledge, experience, skills, and training to execute their assigned responsibilities, duties, functions, and activities?
● Each position has a current and documented job description, employee/contractor training plan, and training file

Document Control
● Document control is a consistent method of controlling documents that includes version numbering, dating, issuing, and withdrawing as controlled procedures
● Ensures that only the current version of the procedural document is used by all personnel
● Procedural documents include SOPs, informed consent templates, and investigational product accountability logs, etc.

Quality Plans
● Documents specifying which procedures and associated resources shall be applied, by whom, and when to a specific project, product, process, or contract; describes how the quality system is applied to a specific deliverable
● Protocol-Specific Quality Management Plan
● Monitoring Plan, Data Management Plan, Project Plan, Recruitment Plan, Quality Oversight Plan of Third Parties, Data Monitoring Committee Charter, Data-Safety Monitoring Plan, Annual Audit Plan

Quality Standards
● Organizational standards/requirements for conducting business
● Regulations, guidances, guidelines, regulatory authority inspection manuals
● Protocol-specific requirements for endpoint assessments (i.e., by an MD or certified assessor), and other study-related procedures/activities
● Each informed consent is obtained prior to any study-specific procedures performed on the subject
● Protocol (e.g., subject enrollment criteria)
● Trial-specific procedures and expectations (e.g., correct data entry per the source documentation into electronic data capture)
● Predefined requirements (e.g., temperature at which investigational product must be stored)

Quality Control
● A set of activities intended to ensure that quality requirements are actually being met
● Organizational controlled, procedural documents (e.g., SOPs [unblinding, randomization], templates, forms, job aids [flow charts, reference cards, business operations manuals])
● Procedural documents include: SOPs, informed consent templates, investigational product accountability logs
● Sponsor’s clinical research associate (CRA)/monitor performs onsite monitoring visits to a clinical investigator
● Refrigerator and freezer temperature is routinely checked/monitored to ensure the temperature is within specified limits (requirements); this routine check/monitoring is documented in the temperature log

Monitoring and Measurement of the Quality System
● Organization monitors customers’ perceptions of whether it has met their requirements
● Suitable methods are utilized to monitor and measure the organization’s performance
● Data analyses of performance (predefined/predetermined metrics and tolerance limits)
● Customer report of product quality complaints
● Internal audits of the quality system (see quality assurance section)

Facilities and Equipment
● Controlled environments required to execute the protocol
● Clinical site’s refrigerators/freezers possess the protocol-required temperature range with documented evidence of ongoing monitoring, calibration, and maintenance per manufacturer specifications

Quality Assurance
● The aspect of quality management that focuses on the confidence that quality requirements are fulfilled
● Self-inspection audits of the quality system, processes, activities, and documents to independently assure that the defined requirements/standards for the protocol/system/process/procedure have been adhered to
● Conducted by defined, qualified personnel independent of the activity; performed in a systematic manner
● Organization’s routine audit of the quality system
● Clinical study report audit
● Clinical investigator site audit
● Quality systems audit of vendor/third party
● Trial Master File audit
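The temperature-log check listed under Quality Control can be illustrated with a short sketch. This is only one possible way to screen a log for readings outside specified limits; the `Reading` class, the `out_of_range` helper, the sample readings, and the 2 to 8 °C limits are all hypothetical and chosen for illustration, since the actual limits come from the protocol.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Reading:
    """One entry in a (hypothetical) refrigerator temperature log."""
    taken_at: datetime
    celsius: float


def out_of_range(readings, low, high):
    """Return the readings that fall outside the inclusive [low, high] limits."""
    return [r for r in readings if not (low <= r.celsius <= high)]


# Hypothetical log entries; the limits below are illustrative, not protocol values.
log = [
    Reading(datetime(2012, 5, 1, 8, 0), 4.5),
    Reading(datetime(2012, 5, 1, 16, 0), 8.6),
    Reading(datetime(2012, 5, 2, 8, 0), 5.1),
]

excursions = out_of_range(log, low=2.0, high=8.0)
for r in excursions:
    print(f"Excursion at {r.taken_at:%Y-%m-%d %H:%M}: {r.celsius} °C")
```

Any reading returned by the helper would then be handled through the organization's own deviation management and CAPA procedures.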

step is the evaluation of the metric and any relevant companion metrics. This analysis supports a robust and comprehensive root cause analysis as to what the issue is, the issue’s impact, and what corresponding directed and focused CAPA steps and continuous improvement measures should be taken.

Further, metrics need to be evaluated with the associated risk management plan and actions analyzed: Did our mitigation plans work? Should we implement our predefined contingency plans? Do we need to reevaluate the assumptions referenced in the development of the risk management plan and revise that plan accordingly? Is this an issue we did not expect, thereby necessitating the development of a new risk management plan?

Quality systems metrics analysis focuses on the following critical questions:¹⁰

1. How is the system performing?
2. Which aspects of the system are performing poorly or need improvement?
3. Which aspects of the system are performing adequately?
4. What is ideal performance?
5. Are the improvements having the desired effect?

Figure 4 presents some useful guidelines for successfully using and evaluating quality systems metrics.¹⁰

Summary

Effective execution of clinical trials featuring “quality built within” requires

Table 1 Descriptions and Examples of Quality Management System Elements (continued)

Quality Management System Element | Example

Deviation Management
● Supports learning in the organization through the identification, recording, and investigation of activities that are not performed correctly or as planned, and provides the framework on how to investigate, plan, and change the way activities are performed
● Protocol deviation log maintained by the site and by CRAs/monitors

Corrective and Preventive Action (CAPA) Program
● Corrective action aims to address and manage identified areas of noncompliance and nonconformity (an issue or problem) by investigating the “root cause” in order to accurately eliminate it
● Preventive actions aim to establish proactive methods/steps/actions to foresee any issues and to prevent them from occurring
● Incorrect version of the informed consent used for a study subject (corrective action: current version of consent in the file)
● Subject’s investigational product dose not adjusted per the results of their liver or renal values, as required by the protocol (preventive action: checklist, per patient visit, outlining requirements)

Continuous Improvement
● The organization shall continually improve the effectiveness of the quality system through the use of the quality policy, quality objectives, audit results, analysis of data, CAPA program, and management review
● Build on the knowledge “known” and “learned” to make proactive improvements in individual trials and across all trials, and in the business enterprise (close correlation to knowledge management for the organization)
● Use of audit findings, audit conclusions, analysis of data, management reviews, and deviation management to improve the quality system/organization
● CAPA plans implemented as a result of audit conclusions, audit findings, internal monitoring of systems/processes and quality system by staff, CRA/site monitor feedback

Issue Escalation
● The issue escalation process describes how the project identifies, tracks, and manages issues and action items that are generated throughout the project life cycle; it also defines how to escalate an issue to a higher level of management for resolution and how resolutions are documented
● Unanticipated issues and action items are assigned to a specific person for action and are tracked to resolution
● Cases of suspected scientific/ethical misconduct and/or fraud are escalated within 24 hours to the compliance/quality assurance department and senior management for investigation

Management Review of the Quality System’s Performance
● Senior management’s overarching responsibility for review and analysis, at predetermined intervals, of the functioning/adequacy of the quality system utilizing key indicators of performance, quality, and revenue
● Has the quality system provided management with the information to reassure them of compliance to the organization’s quality system?
● Through the assessment of metrics (performance, quality indicators), does the organization need to add anything or change anything in the quality system to meet organizational quality objectives?
● Biannual meeting and review of the quality system utilizing metric reports and performance dashboards
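The 24-hour escalation example under Issue Escalation lends itself to a simple timeliness check. The sketch below is a minimal illustration, assuming the clock starts when the issue is identified; the function name, its parameters, and the sample timestamps are hypothetical and not part of any cited procedure.

```python
from datetime import datetime, timedelta

# Window taken from the Issue Escalation example in Table 1.
ESCALATION_WINDOW = timedelta(hours=24)


def escalation_overdue(identified_at, escalated_at, now):
    """Return True if the issue was not escalated within the window.

    escalated_at is None while the issue has not yet been escalated.
    """
    deadline = identified_at + ESCALATION_WINDOW
    if escalated_at is not None:
        return escalated_at > deadline
    return now > deadline


# Hypothetical timestamps for illustration only.
identified = datetime(2012, 6, 1, 9, 0)

# Escalated 8 hours after identification: within the window.
on_time = escalation_overdue(
    identified, datetime(2012, 6, 1, 17, 0), now=datetime(2012, 6, 3, 9, 0)
)

# Still not escalated 25 hours after identification: overdue.
late = escalation_overdue(identified, None, now=datetime(2012, 6, 2, 10, 0))

print(on_time, late)
```

A check of this kind could feed the metric reports and dashboards used in management review, although how an organization tracks escalations is its own design decision.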

References

1. Clinical Trials Transformation Initiative. Available at https://www.trialstransformation.org/scope.
2. Sweeney F. 2011. Defining Quality in Clinical Trials. DIA Annual Meeting, 2011.
3. Cameron K, Sine W. 1999. A framework for organizational quality culture. Quality Management Journal, 1999, pp. 7–25.
4. BARQA. 2010. Quality Systems Workbook. Available at www.barqa.org (free download available).
5. International Organization for Standardization; www.iso.org.
6. Kleppinger C, Ball L. 2010. Building quality into clinical trials with use of a quality systems approach. Clinical Infectious Disease Journal 51(Supp. 1): S111-S116.
7. ICH Q10 Pharmaceutical Quality System presentation, www.ich.org/products/guidelines/quality/quality-single/article/pharmaceutical-quality-system.html.
8. Burrow D. CDER BIMO Warning Letters as Case Studies-Building Quality in Clinical Trials. Presentation, ACRP Global Conference, 2012.
9. American Productivity and Quality Center Publication. 2000. Stages of Implementation: A Guide for Your Journey to Knowledge Management Best Practices. Houston, Texas.
10. Zuckerman D. 2006. Pharmaceutical R & D Metrics. Gower Publishing Ltd., England.

Additional Sources

Cianfrani C, Tsiakals J, West J. 2009. ISO 9001:2008, Explained. Milwaukee, Wis.: ASQ Quality Press.

Clinical Trials Transformation Initiative. Developing Effective Quality Systems in Clinical Trials: An Enlightened Approach; www.ctti.org.

ISO 9001:2008. Quality Management Systems - Requirements; www.iso.org.

Ribière V, Khorramshahgol R. 2004. Integrating total quality management and knowledge management. Journal of Management Systems 16(1).

Toth-Allen J. 2012. Building Quality into Clinical Trials: An FDA Perspective. FDA Webinar, 04 May 2012.

Tricker R. 2010. ISO 9001:2008 for Small Businesses. Burlington, Mass.: Elsevier.

Liz Wool, RN, BSN, CCRA, CMT, has 22 years of experience in the clinical research industry. She is president and CEO of QD-Quality and Training Solutions, Inc. (QD-QTS), a clinical quality systems, training, auditing, and CRO-vendor oversight consulting firm providing services to institutions, investigators, sponsors, and CROs. QD-QTS has offices in San Bruno, Calif., and Franklin, Tenn. A certified Master Trainer and instructional designer, she is also a member of ACRP’s Association Board of Trustees and Editorial Advisory Board. She can be reached at lizwool@qd-qts.com.

a prospective, systematic approach to quality through the implementation of a robust and comprehensive quality management system whereby metrics provide the ability to measure an organization’s performance. The quality management system principles described in this article represent the framework and components of progressive 21st century practices for use by all stakeholders in the clinical research enterprise.

Figure 4 Guidelines for Using and Evaluating Quality Systems Metrics

Successfully Using Quality Systems Metrics
● Identify the stakeholders and the metrics for their activities
● Determine the metrics required for periodic management review of the quality system
● Determine how the metrics will change the way you perform your business
● Select the right metrics and rationalize the calculation rules (do you have this requisite expertise in-house?)
● Determine how you will use the results
● Define the reporting mechanisms (scorecard, dashboard, real-time reports) and issue escalation pathways both internally and externally with other parties
● Determine the right source of the data
● Collect the data and validate the results
● Continuously evaluate the metrics (obtain feedback and study the utility of your measurement)
● Continuously improve the process
● Communicate the results

Successfully Evaluating Quality Systems Metrics
● What does this metric measurement mean?
● What will I do with this information?
● How will this communicate a “quality performance” threshold or tolerance limit requiring investigation or evaluation?
● Do I need another metric to get the “whole picture”?
● Identify any “companion metrics” to assist with the evaluation
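The guidelines in Figure 4 can be made concrete with a small sketch that reports a quality metric as an error rate, compares it with a predefined tolerance limit, and names a companion metric for the follow-up evaluation. This is a minimal illustration, not a validated calculation rule: the `QualityMetric` class and the counts are hypothetical, and the 5% and 3% limits and metric names are borrowed from the examples in Table 2.

```python
from dataclasses import dataclass


@dataclass
class QualityMetric:
    """A quality metric reported as an error rate against a tolerance limit."""
    name: str
    errors: int          # number of nonconforming items observed
    opportunities: int   # number of items checked
    tolerance: float     # predefined tolerance limit, as a fraction
    companion: str       # companion metric to review alongside this one

    @property
    def error_rate(self) -> float:
        if self.opportunities == 0:
            raise ValueError("error rate is undefined with zero opportunities")
        return self.errors / self.opportunities

    def needs_evaluation(self) -> bool:
        # A metric that has met or gone beyond its limit triggers evaluation.
        return self.error_rate >= self.tolerance


# Hypothetical counts; limits and names follow the examples in Table 2.
metrics = [
    QualityMetric("CRF query rate", errors=12, opportunities=150,
                  tolerance=0.05,
                  companion="CRFs completed and submitted on time"),
    QualityMetric("SOP deviation rate", errors=2, opportunities=100,
                  tolerance=0.03,
                  companion="Staff trained on SOPs prior to performing tasks"),
]

flagged = [m for m in metrics if m.needs_evaluation()]
for m in flagged:
    print(f"{m.name}: {m.error_rate:.1%} (limit {m.tolerance:.0%}); "
          f"review with companion metric: {m.companion}")
```

Whether a value exactly at the limit should trigger evaluation is a choice each organization would define in its own calculation rules; the sketch treats "met" the same as "out of range."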

Table 2 Examples of How Quality Indicators are Subsets of Performance Indicators

| Performance Indicator | Quality Indicator |
| --- | --- |
| Case report forms (CRFs) completed and submitted on time > 90% | CRF query rate < 5% |
| Imaging study completed on time > 95% | Image readability > 98% |
| Blood samples collected on time > 95% | Quantity not sufficient < 1% |
| Staff trained on SOPs prior to performing responsibilities/tasks in SOP > 95% | SOP deviation rate < 3% |
| Staff trained to the protocol-investigational plan prior to performing delegated study tasks > 95% | Protocol deviations-violations < 2% |
| Site staff delegated tasks prior to study-start > 95% | Staff delegated responsibilities correctly per licensure and/or certification requirements per state/country 100% |
| Subjects enrolled on time > 85% | Subjects enrolled meet enrollment criteria 100% |
| Informed consent obtained 100% | Subjects consented with the correct ICF version 100% |
| Informed consent obtained 100% | Subjects consented prior to study-specific |