ISSN(Online): 2320-9801

ISSN (Print) : 2320-9798

International Journal of Innovative Research in Computer and Communication Engineering

(A High Impact Factor, Monthly, Peer Reviewed Journal)

Website: www.ijircce.com

Vol. 6, Issue 8, August 2018

American Sign Language Perception and Translation in Speech

Nihal Mehta

Graduate Student, Department of Computer Engineering, Sinhgad Academy of Engineering, Pune, India

ABSTRACT: Communication plays a fundamental role in human interaction, but it can be inconvenient, problematic, and expensive for deaf-mute people to communicate with non-ASL speakers. The aim of this paper is to bridge the barrier between people who are unable to speak and non-ASL speakers so that they can communicate conveniently. In the current work, we developed a smart glove that detects gestures using flex sensors and an accelerometer/gyroscope to detect the motion of the hand in three-dimensional space. The gestures are then mapped to a database of supervised data using the KNN algorithm to recognize English alphabets, numbers, and basic sentences. The experimental results suggest that the system is accurate and cost-effective.

KEYWORDS: ASL; flex sensor; Atmega328; Tactile sensor; Accelerometer; Gesture recognition module; Text-to-speech synthesis module

I. INTRODUCTION

American Sign Language is a sign language, that is, a language that chiefly uses manual communication to convey meaning, as opposed to acoustically conveyed sound patterns. Sign languages should not be confused with body language, which is a kind of non-linguistic communication. Wherever communities of deaf people exist, sign languages have developed, and they are at the core of local deaf cultures.

Although signing is used primarily by the deaf, it is also used by others, such as people who can hear, but cannot physically speak.

American Sign Language (ASL) is the predominant sign language of Deaf communities in the United States and most of Anglophone Canada. In 2013, the White House published a response to a petition that gained over 37,000 signatures to officially recognize American Sign Language as a community language and a language of instruction in schools. The response, titled "there shouldn't be any stigma about American Sign Language", stated that ASL is a vital language for the Deaf and hard of hearing. Stigmas associated with sign languages and with the use of sign for educating children often lead to the absence of sign during the periods in children's lives when they can acquire languages most effectively.

In previous works, gestures were recognised using vision-based image processing. The drawback of such systems was that they required many advanced processing algorithms.

This project finds a solution through the development of a prototype device to practically bridge the communication gap between people knowing and not knowing ASL in a way that improves on the methods of pre-existing designs. The team aims to develop a glove with various electronic sensors to sense the flexing, rotation, and contact of various parts of the hands and wrist, as used in ASL signs.

Fig. 1 American Sign Language (ASL) [4]

II. RELATED WORK

In 1999, the first attempt to solve the problem described a simple glove system that can learn to decipher sign language gestures and output the associated words. Since then, numerous teams, mainly undergraduate or graduate university projects, have created their own prototypes, each with its own advantages and design decisions. [1]

The idea of an instrumented glove for sign language translation is older still. A US patent filed under the title “Communication system for deaf, deaf-blind, or non-vocal individuals using instrumented glove” is one of the first attempts found to use sensors in a glove to facilitate communication between hearing and non-hearing people.

In 2014, Monica Lynn and Roberto Villalba of Cornell University created a sign language glove for a final project in a class. Their device is “a glove that helps people with hearing disabilities by identifying and translating the user’s signs into spoken English”. It uses five Spectra Symbol flex-sensors, copper tape sensors, an MPU-6050 three-axis gyroscope, an ATmega1284p microcontroller and a PC. The microcontroller organizes the input into USB communications sent to the PC. Their machine learning algorithm is trained through datasets to calibrate the glove [1].

The most recent development in sign language translation came from engineering students at Texas A&M University in 2015.

As with the Cornell glove, the need for a computer limits the use of this device to non-everyday situations. Furthermore, although the extensive use of sensors throughout the arm is helpful for providing a more complete understanding of a signed gesture, so many components would very likely be too cumbersome to be used and too expensive.

III. PROPOSED ALGORITHM

Fig. 2 Block Diagram [1]

The steps involved in sign language to speech conversion are described as follows:

Step 1: The flex sensors are mounted on the glove along the length of each finger, with a tactile sensor on the tip of each finger.

Step 2: Depending on the bend and movement of the hand, different signals corresponding to the x-axis, y-axis, and z-axis are generated.

Step 3: The flex sensors output a data stream that depends on the degree of bend produced when a sign is gestured.

Step 4: The output data streams from the flex sensors, tactile sensors, and accelerometer are fed to the Arduino microcontroller, where they are converted to their corresponding digital values and processed.

Step 5: The microcontroller unit compares these readings with predefined threshold values, recognizes the corresponding gesture, and displays the corresponding text.

Step 6: The text output obtained from the sensor-based system is sent to the text-to-speech (TTS) synthesis module.

Step 7: The TTS system converts the text output into speech, and the synthesized speech is played through a speaker. [2]
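The threshold comparison in Step 5 can be illustrated with a minimal Python sketch. It is not the firmware used in this work: the gesture labels, the threshold windows, and the assumption of five 10-bit flex readings per sample are all hypothetical and chosen only to show the idea.

```python
# Hypothetical threshold table: for each gesture, one inclusive (low, high)
# window of 10-bit ADC readings per finger. The values are illustrative only.
GESTURE_THRESHOLDS = {
    "A": [(0, 300), (600, 1023), (600, 1023), (600, 1023), (600, 1023)],
    "B": [(600, 1023), (0, 300), (0, 300), (0, 300), (0, 300)],
}


def recognize(readings, table=GESTURE_THRESHOLDS):
    """Return the first gesture whose windows contain every reading, else None."""
    for label, windows in table.items():
        if len(windows) != len(readings):
            continue  # skip entries that do not match the sensor count
        if all(low <= value <= high for value, (low, high) in zip(readings, windows)):
            return label
    return None  # no gesture matched; the caller may simply ignore the sample
```

A sample that falls outside every window returns `None`, which lets the caller skip transitional hand positions instead of forcing a match.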

A. Sensor System

The sensor system is made up of three main components: the flex sensor circuits, the contact sensor circuits, and the gyroscope. Each type of sensor provides its own data to the processor so that the processor can recognize subtle differences between gestures. Each flex sensor is set up in a voltage divider circuit so that its output can be read accurately by the microprocessor.

1. Flex Sensors

Flex sensors are resistive carbon elements. When bent, the sensor produces a resistance that varies with the bend radius. The resistance varies from about 10 kΩ to 30 kΩ: an unflexed sensor has a resistance of about 10 kΩ, and when bent the resistance increases to about 30 kΩ at 90°. The sensor is incorporated into the device using a potential divider.

Fig.3. Equivalent circuit of flex sensor [5]
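The behaviour of the potential divider can be sketched numerically. The sketch below is only an illustration under stated assumptions: a 10 kΩ fixed resistor on the low side, a 3.3 V supply, a 10-bit ADC, and a linear relation between resistance and bend angle are all assumed here and are not taken from the measured circuit.

```python
def divider_voltage(r_flex_ohm, r_fixed_ohm=10_000.0, v_supply=3.3):
    """Voltage across the fixed resistor with the flex sensor on the high side.

    The fixed resistor value and supply voltage are assumptions.
    """
    return v_supply * r_fixed_ohm / (r_fixed_ohm + r_flex_ohm)


def adc_code(voltage, v_ref=3.3, bits=10):
    """Convert a voltage to the nearest ADC code, clamped to the valid range."""
    max_code = (1 << bits) - 1
    code = round(voltage / v_ref * max_code)
    return max(0, min(max_code, code))


def estimate_bend_deg(r_flex_ohm, r_flat=10_000.0, r_bent=30_000.0):
    """Linearly interpolate bend angle between 0 deg (10 kOhm) and 90 deg (30 kOhm).

    The linear model is an assumption; real flex sensors are only roughly linear.
    """
    fraction = (r_flex_ohm - r_flat) / (r_bent - r_flat)
    return max(0.0, min(90.0, fraction * 90.0))
```

Under these assumptions, the divider output falls from 1.65 V for a flat sensor to 0.825 V at a 90° bend, so only part of the ADC range is used; a different fixed resistor would shift this window.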

2. Tactile Sensors

A tactile sensor is a device that measures information arising from physical interaction with its environment. Tactile sensors are generally modeled after the biological sense of cutaneous touch, which is capable of detecting stimuli resulting from mechanical stimulation, temperature, and pain (although pain sensing is not common in artificial tactile sensors). A resistive contact sensor was fixed to the tip of each finger to measure contact against the fingers. This is important for gesture recognition, as touch is one of the key mechanics of ASL gestures.

Fig.4. Tactile sensor

3. Accelerometer

The accelerometer in the gesture vocalizer system is used as a tilt sensor that detects the tilting of the hand. An ADXL103 accelerometer is used, as shown in Figure 5. The output of the accelerometer is fed to the third module, which contains a pipeline structure of two ADCs. There is a technical issue at this stage of the project: when the analog output of the accelerometer, which ranges from 1.5 V to 3.5 V, is converted to a digital 8-bit output, the system becomes very sensitive. If a more sensitive system is used, a small tilt of the hand produces a large change in the digital output, which is difficult to handle.

Fig.5. Block diagram of accelerometer sensor
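To make the sensitivity issue concrete, the following sketch quantizes the 1.5 V to 3.5 V analog range with an 8-bit converter and maps a voltage back to a tilt angle. The 5 V ADC reference, the 2.5 V zero-tilt midpoint, and the 1 V per g scale are assumptions chosen to be consistent with the stated voltage range; they were not measured on the prototype.

```python
import math


def quantize(v_out, v_ref=5.0, bits=8):
    """Map an analog voltage to an ADC code (truncating), clamped to the valid range.

    The 5 V reference is an assumption.
    """
    levels = 1 << bits
    code = int(v_out / v_ref * levels)
    return max(0, min(levels - 1, code))


def tilt_from_voltage(v_out, v_zero_g=2.5, volts_per_g=1.0):
    """Estimate tilt in degrees from a single-axis accelerometer voltage.

    Assumes the output is v_zero_g when level and changes by volts_per_g
    per g of gravity along the sensing axis.
    """
    g = (v_out - v_zero_g) / volts_per_g
    g = max(-1.0, min(1.0, g))  # gravity alone cannot exceed +/- 1 g
    return math.degrees(math.asin(g))
```

With these assumed values, the full ±90° tilt range spans only about 103 of the 256 available codes (76 to 179), so each code step corresponds to roughly a degree of tilt near level and considerably more near the extremes.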

4. Arduino

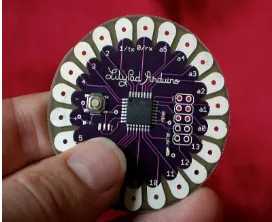

The Arduino LilyPad (Fig. 6) is a microcontroller board based on the ATmega32u4. It has 9 digital input/output pins (of which 4 can be used as PWM outputs and 4 as analog inputs), an 8 MHz resonator, a micro USB connection, a JST connector for a 3.7 V LiPo battery, and a reset button. It contains everything needed to support the microcontroller; simply connect it to a computer with a USB cable or power it with a battery to get started.

Fig. 6 Arduino Lilypad

To process the incoming signals, each analog signal from the flex sensors must be converted to a digital signal. This means that to process all eight of the flex sensors, eight channels of ADC are required. Most microcontrollers come with some analog input pins, but this number is limited and can quickly crowd up the microcontroller. The addition of ADCs can be used to add more analog pins to the overall processing system.

B. Software Module

1. K Nearest Neighbors Classifier

The k-nearest neighbors (k-NN or KNN) algorithm classifies an object based on the training data closest to it. The training data are projected onto a multi-dimensional space in which each dimension represents a feature of the data. The space is divided into regions based on the classification of the training data. A point in this space is assigned class c if c is the most common class among its k nearest neighbors. Nearness is usually calculated using the Euclidean distance. If p = (p1, p2) and q = (q1, q2), then the Euclidean distance between the two points is:

$$d(p, q) = \sqrt{(p_1 - q_1)^2 + (p_2 - q_2)^2}$$

Description:

p1: position of actual data on x-axis. p2: position of actual data on y-axis. q1: position of reference data on x-axis. q2: position of reference data on y-axis.

d : distance between actual data and reference data

The given equation is the Euclidean distance formula between two points.
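The classification rule described above can be sketched in a few lines of Python. This is a generic k-NN illustration, not the code used on the glove: the function names, the choice of k = 3, and the training points in the usage note are hypothetical.

```python
import math
from collections import Counter


def euclidean(p, q):
    """Euclidean distance between two points of equal dimension."""
    return math.sqrt(sum((pi - qi) ** 2 for pi, qi in zip(p, q)))


def knn_classify(sample, training, k=3):
    """Return the majority label among the k training points nearest to sample.

    training is a list of (feature_vector, label) pairs.
    """
    nearest = sorted(training, key=lambda item: euclidean(sample, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical two-feature training set with two gesture classes.
TRAINING = [
    ((1.0, 1.0), "A"), ((1.0, 2.0), "A"), ((2.0, 1.0), "A"),
    ((8.0, 8.0), "B"), ((9.0, 8.0), "B"), ((8.0, 9.0), "B"),
]
```

For example, `knn_classify((2.0, 2.0), TRAINING)` selects the three nearest "A" points and returns "A". In the glove setting each feature vector would hold one value per sensor rather than two coordinates; the distance function above works unchanged for any dimension.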

2. Application of KNN in Gesture Recognition

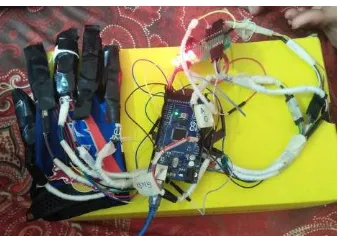

Fig.7 Set of supervised cluster for alphabet B. Fig. 8 Prototype built for the project.

C. Output System

This system is the final step in implementing translation. After the gesture has been recognized, the output will be transmitted to a base station via Bluetooth. Then, the recognized gesture will be played on the base station's speakers via a text-to-speech module so that people can understand the gesture.

IV. CONCLUSION AND FUTURE WORK

The team has succeeded in building a design necessary for the project. However, more can be done to improve the device’s accuracy, form, and other important specifications.

To help shape the device into something more slim and comfortable, the team suggests a few improvements to the prototype. Primarily, the team suggests designing a smaller and more compact PCB, which will more efficiently combine the components of the system. The team also recommends using conductive fabric to possibly replace the wiring, the contact sensors, or the flex sensors to allow for less bulky wiring and more conforming, lightweight connections. Slimmer battery supplies which may provide the required voltage and hopefully enough power to last for at least 5 hours would also be a valued improvement to the prototype.

The software used in the algorithm has not been finely tuned. There are many optimizations which may be possible. Testing with actual ASL users to determine how well the user requirements and design specifications were followed would allow for a more accurate library of gestures and a more practical product.

As a final remark, the team is excited about the progress made in the development of the glove prototype. Although it is not a finished product, it shows that a glove outfitted with sensors, a microcontroller, and wireless communications can be used to translate ASL signs. It satisfies all of the major requirements put forth by the team, and it may lead to further developments in translation devices. With increased attention to the challenge of ASL translation, the team hopes the communication gap between ASL users and the hearing may soon be diminished.

REFERENCES

[1] Princesa Cloutier, Alex Helderman, and Rigen Mehilli, “Prototyping a Portable, Affordable Sign Language Glove”.

[2] Aarthi M, Vijayalakshmi P, “SIGN LANGUAGE TO SPEECH CONVERSION” , 2016 FIFTH INTERNATIONAL CONFERENCE ON RECENT TRENDS IN INFORMATION TECHNOLOGY

[3] Mr. Jenyfal Sampson, Keerthiga V. , Mona P. , Rajha Laxmi A. , “Hetero Motive Glove For Hearing And Speaking Impaired Using Hand Gesture Recognition. ” International Journal Of Engineering Research in Electronics And Telecommunication Engineering (IJERECE).

[4] Finger Spelling Digital Image. http://www.lifeprint.com/asl101/fingerspelling/images/abc1280x960.png

[5] https://itp.nyu.edu/archive/physcomp-spring2014/sensors/Reports/Flex.html

[6] Roman Akozak www.romanakozak.com/signlanguagetranslator

[7] D. Quick. (2012, July 12). “Sign language-to-speech translating gloves take out Microsoft Imagine Cup 2012” [Online]. Available: http://www.gizmag.com/enabletalk-sign-language-gloves/23268/

[8] Ravikiran, J., Kavi, M., Suhas, M., Sudheender, and S., Nitin, V. “Finger Detection for Sign Language Recognition”, Proceedings of the International multi conference of engineers and computer scientists, Vol. 2, No. 1, pp. 234-520,2009.

[9] Vinod J Thomas, Diljo Thomas, “A Motion based Sign Language Recognition Method”, International Journal of Engineering Technology vol 3 Issue 4, ISSN 2349-4476, Apr 2015.
