Fidelity metrics for animation

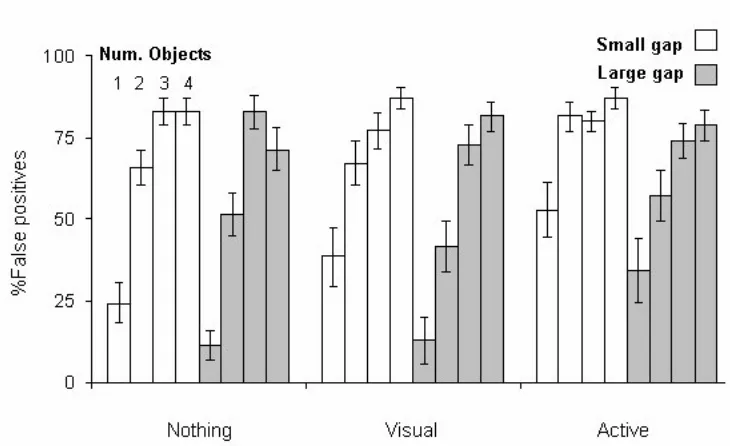
