Wearable and mobile devices

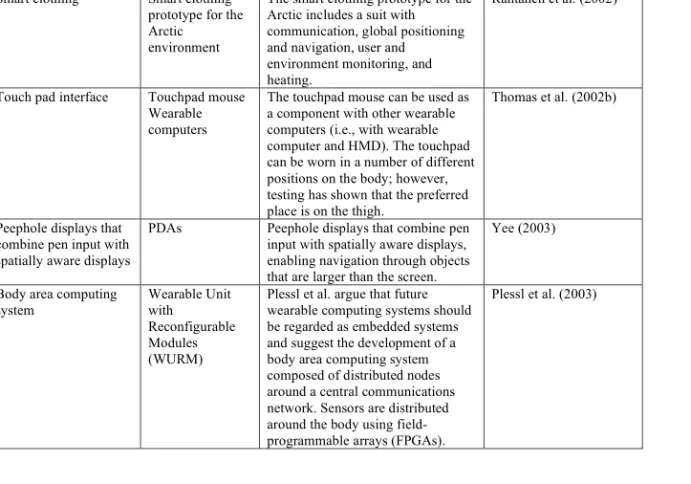
