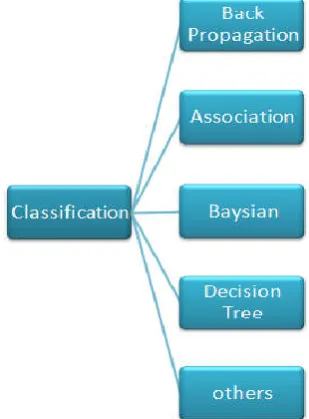

Diabetes data prediction using data classification algorithm
