EMC XTREMIO WORKLOAD CONSOLIDATION

AND COPY MANAGEMENT FOR MICROSOFT

SQL SERVER

•

Virtualize and consolidate OLTP and OLAP instances with consistent

performance

•

Reduce SQL Server storage footprint with XtremIO inline data

reduction capabilities

•

Simplify DevOps with EMC AppSync management for XtremIO Virtual

Copies

EMC Solutions

AbstractThis white paper describes the operational advantages of virtualized Microsoft SQL Server 2012 and 2014 databases deployed on an EMC® XtremIO® all-flash array, and demonstrates how the solution enhances the capabilities of SQL Server database-dependent environments.

Copyright © 2015 EMC Corporation. All rights reserved. Published in the USA. Published December 2015

EMC believes the information in this publication is accurate as of its publication date. The information is subject to change without notice.

The information in this publication is provided as is. EMC Corporation makes no representations or warranties of any kind with respect to the information in this publication, and specifically disclaims implied warranties of merchantability or fitness for a particular purpose. Use, copying, and distribution of any EMC software described in this publication requires an applicable software license.

EMC2, EMC, AppSync, Avamar, Connectrix, X-Brick, XtremIO, and the EMC logo are registered trademarks or trademarks of EMC Corporation in the United States and other countries. All other trademarks used herein are the property of their respective owners.

For the most up-to-date listing of EMC product names, see EMC Corporation Trademarks on EMC.com. EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

White Paper

Part Number H14673

2 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Contents

Executive summary... 4

Solution architecture and components ... 7

Solution design and configuration ... 12

Performance and scalability testing ... 20

Conclusion ... 47

References ... 48

Appendix ... 49

Executive summary

Database management systems (DBMSs) such as Microsoft SQL Server are used to support business-critical applications. To deliver fast response times across the range of applications, SQL Server databases require storage designed for both low-latency transactional I/O and high-throughput analytic workloads.

Virtualization supports greater consolidation of different types of database

workloads, enabling both online transaction processing (OLTP) and online analytical processing (OLAP) workloads to share the same servers and storage. The EMC® XtremIO® all-flash array is designed to perform in demanding virtualized

environments and effectively addresses the challenges associated with virtualizing I/O-intensive database workloads. XtremIO delivers very high and consistent performance for random I/O, consistent ultra-low latency, and the best cost-per-database economics. This applies equally to OLTP and OLAP workloads, and to consolidated mixed workloads on a common XtremIO storage array.

This solution provides a highly available and scalable architecture for consolidated SQL Server 2012 and 2014 workloads deployed in a VMware vSphere virtualized environment with XtremIO all-flash storage. The virtualized transactional and decision-support databases are consolidated on the same physical hosts and storage array. VMware vSphere High Availability (HA) provides failover protection against hardware and OS outages. Microsoft SQL Server AlwaysOn Availability Groups (AlwaysOn AG) provides database high availability and disaster recovery.

Data protection and availability are enhanced and simplified by using XtremIO Virtual Copy (XVC) technology to create instant, high performance copies of the databases. EMC AppSync® copy management software automates copy creation and enables near-instant data recovery. XVC also enables highly effective business intelligence (BI) reporting, realtime analytics, and test and development (DevOps) with no performance impact to the production volumes.

Through a series of use cases and test scenarios, the solution demonstrates how XtremIO enhances the capabilities of SQL Server database-dependent environments in terms of performance, data protection, data reduction, data-at-rest encryption (DARE), simplified deployment, and database repurposing.

The solution demonstrates:

• Achieve maximum array performance with XtremIO greatly simplified LUN provisioning, using standardized sizes for ease of deployment and

management, and without the need to specify disk groups, RAID configuration, SQL Server object placement, or free space schemes.

• Superior cost-to-performance ratio using XtremIO Virtual Copies of SQL Server instances for DevOps and QA, in comparison to traditional copy management methods.

Business case

Solution overview

Key results

4 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

• EMC AppSync automation and management of XtremIO Virtual Copies can significantly accelerate AlwaysOn AG secondary replica creation, in comparison to SQL Server native backup and restore. Virtual copies of production

databases can be created off the secondary replica for downstream operations such as development and testing.

• Outstanding transactional performance achieved with realistic OLTP

workloads—up to 300,000 IOPS in this configuration, while maintaining 1 ms to 2 ms host latencies. This greatly exceeds the industry best practices recommendation for storage subsystem response times.

• No performance impact when consolidating vastly different random OLTP (8K blocks) and sequential OLAP (64K to 128K blocks) workloads on the same XtremIO array.

• XtremIO arrays spread workload demand evenly across all storage controllers and disk drives for consistent performance and maximum disk lifetime. • SQL Server indirect checkpoints produce consistently higher TPS and generate

more write I/O activity than the automatic checkpoints for OLTP workloads. The XtremIO array delivered average sub-millisecond latency across all tests for both indirect and automatic checkpoints.

• SQL Server reached full cache utilization of 150 GB in less than 4 minutes due to the extremely low latency of the XtremIO all flash volumes.

• SQL Server Transparent Database Encryption (TDE) decreased OLTP

performance by 5 percent and OLAP performance by 30 percent in comparison to XtremIO Data at Rest Encryption (DARE). Implementing TDE also results in additional capacity utilization due to encryption of data prior to being written to the array.

• All configurations of OLTP, OLAP, and AlwaysOn AG copies benefitted from either XtremIO compression or XtremIO deduplication or both.

This white paper describes the architecture and key components of the solution and demonstrates the operational advantages of virtualized SQL Server databases deployed on XtremIO. The paper outlines how the solution simplifies configuration and, through a series of use cases, demonstrates how the solution enhances the capabilities and performance of SQL Server database environments.

The white paper is intended for SQL Server database administrators, VMware

administrators, storage administrators, IT architects, and technical managers who are responsible for designing, creating, and managing SQL Server databases,

infrastructure, and data centers. Document purpose

Table 1 provides definitions for some of the terms used in this white paper.

Table 1. Terminology Term Definition

Asynchronous commit mode

In AlwaysOn AG, an availability mode under which changes sent an availability replica do not have to be written to disk for transactions to complete on the primary database.

Availability database

In AlwaysOn AG, a database that belongs to an availability group. For each availability database, the availability group maintains a single read-write copy (primary database) and one to eight read-only copies (secondary databases).

Availability

group In AlwaysOn AG, a container for a set of databases (availability databases) that fail over together. Availability

replica

In AlwaysOn AG, an instance of an availability group that is hosted by a specific SQL Server and contains a copy of each availability database in the availability group.

BW An abbreviation for bandwidth used in diagrams in this white paper.

OLTP Online transaction processing. Transaction-oriented processes such as

data entry and retrieval transaction processing.

OLAP Online analytical processing. Analytics-oriented processes such as

reporting, self-service BI, and data mining. Synchronous

commit mode

In AlwaysOn AG, an availability mode under which changes sent to an availability replica must be written to disk for transactions to complete on the primary database.

VMDK Virtual Machine Disk. A file format for virtual disks to be used in virtual

machines.

XVC XtremIO Virtual Copy (formerly XtremIO snapshot). In-memory, writeable

replica. XVC technology abstracts copy operations as unique in-memory metadata operations with no impact on any back-end resources.

EMC and the authors of this document welcome your feedback on the solution and the solution documentation. Contact EMC.Solution.Feedback@emc.com with your comments.

Authors: Anthony O’Grady, Eyal Sharon, Judith Cuppage. Terminology

We value your feedback!

6 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Solution architecture and components

This solution provides an optimal cost-to-performance ratio for SQL Server mission-critical application environments. The SQL Server 2012 and 2014 databases are deployed on virtualized SQL Server instances on an XtremIO storage array. The

virtualized DevOps SQL Server instances in the environment access XtremIO copies of the production database for testing and development purposes. XtremIO integrates with AppSync to provide database-aware copy management.

The solution includes the following key technology components: • EMC XtremIO all-flash array

• EMC Virtual Storage Integrator (VSI)

• VMware vSphere and VMware vCenter Server 6.0 • Microsoft SQL Server

• EMC AppSync copy management software EMC XtremIO

The EMC XtremIO storage array is an all-flash system that uses flash storage to deliver value across the following main dimensions:

• Scalability—A single X-Brick® is the building block of the XtremIO scale-out architecture. You can cluster up to eight X-Bricks together to provide increased performance and capacity, as shown in Figure 1.

Figure 1. XtremIO all-flash array family

The XtremIO array’s multi-controller, n-way active architecture means that performance scales linearly with capacity. Two X-Bricks supply twice the IOPS of the single X-Brick configuration, four X-Bricks supply four times the IOPS of the single X-Brick configuration, and so on. Latency remains consistently low as the system scales out.

Key solution components

• Performance—Regardless of how busy the system is, and regardless of storage capacity utilization, latency and throughput remain consistent, predictable, and constant. Latency within the array for an I/O request is typically much less than one millisecond (ms).

• Efficiency—XtremIO supports high-performance and space-efficient copies, inline data reduction (including inline deduplication and data compression), thin provisioning, DARE, and full integration with VMware vSphere Storage APIs for Array Integration (VAAI) with support for FC and iSCSI protocols.

• Simplicity—Provisioning storage with XtremIO is as simple as deciding the size of the LUN or LUNs that you want to create and then mapping the LUNs to a host. There is no need to select the RAID type, create a RAID group, or decide whether to enable thin provisioning, deduplication, or any other data service. These functions are built into XtremIO.

• Data protection—XtremIO uses a proprietary flash-optimized data protection algorithm, XtremIO Data Protection (XDP), which protects data while enabling performance that is superior to any existing RAID algorithms. Optimizations in XDP also result in fewer writes to flash media for data protection purposes. For more information about XtremIO, visit www.emc.com/storage/xtremio/. EMC Virtual Storage Integrator

EMC Virtual Storage Integrator (VSI) is a plugin for VMware vCenter Server that integrates EMC storage management with vCenter Server. VSI provides VMware administrators with full visibility into EMC storage directly from vCenter, and enables server administrators to easily handle storage-related tasks using the familiar vCenter Server interface. In this solution, we used VSI to streamline and simplify the task of configuring best practice settings.

For more information about VSI, refer to the EMC VSI for VMware vSphere Web Client

Product Guide.

VMware vSphere

This solution demonstrates how XtremIO offers efficient enterprise storage with VMware vSphere cloud infrastructures. vSphere provides complete and robust virtualization, enabling SQL Server instances to be virtualized on fully functional virtual machines that run isolated and encapsulated operating systems and applications. In this solution, we configured the ESXi servers as a vSphere High Availability (HA) cluster to ensure failover protection against hardware and operating system outages within the virtualized environment.

Microsoft SQL Server

The SQL Server DBMS is capable of handling enterprise-level workloads. This solution uses the OLAP data warehousing (DW), BI, and operational OLTP features of the product.

SQL Server also provides several high-availability options for databases. This solution uses AlwaysOn Failover Cluster Instances (FCI) and AlwaysOn AG.

8 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

EMC AppSync

AppSync offers simple, SLA-driven, self-service application protection with tiered protection options and proven recoverability. AppSync support for XtremIO enables orchestration and automation of the creation, mounting, and recovery of multiple SQL Server database-consistent copies. Service plans that match the specific SLA

requirements of individual databases control these operations. AppSync supports the following features for SQL Server:

• AlwaysOn AG

• Dynamic discovery of user databases during a service plan run

• SQL Server databases on physical hosts, raw device mapping (RDM) in physical compatibility mode, and virtual disks on virtual hosts

Note: AppSync does not support RDM disks in virtual mode.

• Data protection of stand-alone and clustered production SQL Server instances • Mounting to any non-clustered instance of SQL Server (physical or virtual) and

mounting as files to any Windows host

• Mounting with recovery, no recovery, or standby on non-clustered instances For more information about AppSync, visit

www.emc.com/storage/data-replication/appsync.htm.

As shown in Figure 2, the solution architecture includes the following:

• Storage layer—Two XtremIO X-Bricks clustered as a single logical storage system.

• SQL Server database layer

Two clustered instances (Instance1 and Instance2), and a single standalone instance (Instance3), of SQL Server 2014, with 10 OLTP databases per instance.

A SQL Server AlwaysOn AG instance (Instance 2, Instance4, and Instance5) configured using XVC copies.

One clustered instance (Instance6) of SQL Server 2012 with a 4 TB OLAP database.

XVC copies of the SQL Server databases for backup and repurposing, with both first-generation master copies and second-generation copies used for DevOps. The copies can be mounted to any of the mount hosts at any time, as necessary.

• Application Layer –The application workload tools consist of separate OLTP and OLAP clients. vCenter Server and the EMC Virtual Storage Integrator plug-in provide central management and simple provisioning. AppSync provides database-aware copy management. The solution has a dependency on Microsoft Active Directory for enforcing security and authenticating and authorizing users.

Solution architecture

• Network layer—108 GB/s of active bandwidth with SAN switches that support virtualized data centers and enterprise clouds.

• Physical servers and virtualization layer—Four rack-mounted servers that enable a high-performing, consolidated, virtualized SQL Server infrastructure for deployment flexibility without the need to modify the application. The ESXi servers are configured as a VMware vSphere High Availability (HA) cluster.

Figure 2. Logical architecture of the solution

10 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Table 2 lists the hardware resources used in the solution.

Table 2. Hardware resources

Hardware Quantity Configuration

Storage array 1 EMC XtremIO with two clustered X-Bricks with a total of

30.4 TB of usable physical capacity.

Servers 4 40 Intel E7 2.9 GHz processor cores (80 logical) with:

• 512 GB of RAM

• 2 x 1 Gb quad Ethernet (GbE) NIC

• 2 x 10 GbE NICs

• 2 x 8 GB FC dual-port HBAs

LAN switches 2 10 GbE, 32-port non-blocking LAN switch.

SAN switches 2 EMC Connectrix® DS-6510B enterprise-class SAN switch.

Table 3 lists the software resources used in this solution.

Table 3. Software resources

Software Version Notes

XtremIO 4.0.1 All-flash storage.

VMware vSphere 6.0 Hypervisor that hosts:

• Four enterprise-class production virtual

machines

• Three clustered and one standalone SQL

Server virtual machines

Each virtual machine is configured with 40 vCPUs and 150 GB of RAM.

VMware vCenter Server 6.0 vSphere management.

Microsoft Windows 2012 R2 SP1 OS for database servers.

Microsoft SQL Server 2012 Enterprise

Edition SP2 Database software.

Microsoft SQL Server 2014 Enterprise

Edition SP1 Database software.

EMC AppSync 2.2.2.0 SQL Server SLA-driven copy management

tool, which is integrated with the Volume Shadow Copy Service (VSS).

EMC PowerPath/VE 6.0 EMC storage multipath management.

EMC Virtual Storage

Integrator 6.6 VSI provides the ability to view, provision, and manage EMC block and file storage in a Windows environment.

Industry-standard OLTP and OLAP workload toolkits

n/a These toolkits simulate OLTP and OLAP

workloads respectively. Hardware

resources

Solution design and configuration

With XtremIO, the performance of your SQL Server workloads is always consistent and predictable, regardless of LUN size, access patterns (sequential or random), and locality of reference—database administrators (DBAs) do not need to worry about hot spots on the array.

Because storage configuration is set at the factory, you do not need to design or configure disk groups or RAID types. File separation is also unnecessary. Because of the consistent performance of XtremIO across all workloads, disruptive tempdb workloads can co-exist in the same LUN with their write-intensive transaction logs and still provide excellent performance. In addition, with built-in thin provisioning, storage is allocated only when it is needed. This allows DBAs to create larger LUNs to accommodate future or unexpected database growth without wasting any physical space on storage.

Metadata operations, such as inline data reduction, thin provisioning allocations, and internal array copy operations, are conducted entirely in memory without affecting I/O operations.

Uncertainty in capacity planning models requires that you plan for unpredictable data growth to meet changing business needs. Ensuring free space for growth at all levels of the storage stack (from data file to LUN) and not locking out valuable storage capacity ahead of time is vital.

EMC and SQL Server traditional best practices recommend that you configure SQL Server data file sizes to be 10 percent to 20 percent larger than the current or intended database size. This configuration requires free space at the NTFS volume level and results in the underlying storage space being locked out until the space is needed. A maintenance window and manual intervention is required if the NTFS volume needs expansion.

It is difficult to balance how much free disk space to allocate for the database at the design stage—free space that will not have an immediate use—against the amount of free space that needs to be readily available for near future growth. The cost and management complexity of free space is compounded multiple times in

environments with many SQL Server instances and large numbers of database and log files.

Figure 3 shows an example of a 1 TB database, which requires at least 1.58 TB of allocated storage space to adhere to traditional storage design best practices. This represents about a 58 percent waste of physical storage allocation. Figure 3 also shows how, on XtremIO storage, the database can easily use significantly less physical storage allocation and still satisfy the logical free space requirement for storage planning. The example shows an overall 2:1 data reduction rate on XtremIO for a typical OLTP or OLAP database environment.

XtremIO storage design overview

Database storage design

considerations

12 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Figure 3. XtremIO storage capacity planning

XtremIO uses thin provisioning, deduplication, and compression to achieve this reduction. XtremIO thin provisioning prevents large volume allocations from wasting physical storage in advance of need, while still providing room for growth when required. Thin provisioning also eliminates operational complexities—DBAs can allocate, from the start, as much LUN space, virtual file system space and, therefore, NTFS volume space as required.

For this solution, we deployed XtremIO as a cluster of two X-Bricks (20 TB each) with an available physical capacity of 30.5 TB, as shown in Figure 4.

Figure 4. XtremIO Management Application storage panel

XtremIO processes both random and sequential I/O generated from a database in an equally balanced way across the array. This simplifies the storage design for SQL Server databases compared to traditional provisioning techniques.

Solution storage design

For the solution, we standardized the volume size for ease of deployment, as shown in Table 4.

Table 4. Database volume template

Volume Volume size Volume type OLTP databases

OS 120 GB VMDK

SQL Server installation and systems databases

120 GB VMDK

SQL Server data and log 2 TB Raw device mapping (RDM) or VMDK

Tempdb 2 TB RDM or VMDK

OLAP databases

OS 120 GB VMDK

SQL Server installation and

systems databases 120 GB VMDK

SQL Server data 2 TB RDM or VMDK

SQL Server log 500 GB RDM or VMDK

Tempdb 2 TB RDM or VMDK

Note: The performance and availability of RDM and VMDK volumes are similar, so either

choice is reasonable depending on individual design requirements. Certain technologies, such as Windows Server Failover Clustering (WSFC), require RDMs when running in virtual machine clustering (to support SCSI-3 reservations).

We created the following virtualized SQL Server instances and databases on a vSphere HA cluster:

• Five SQL Server 2014 production instances with transactional (OLTP) databases • One SQL Server 2012 production instance with an analytical (OLAP) database As shown in Table 4, we used different database volumes to store the relevant database files. In general, a single database LUN is sufficient for most OLTP

databases that require high disk performance. OLAP databases might require up to eight LUNs to provide more disk queues for data files, and a single LUN for the log file.

Note: With XtremIO, putting all database files for a single SQL Server database into one LUN

easily provides over 20,000 IOPS for OLTP workloads, with sub-millisecond performance. For OLAP databases, evenly distribute the data files across eight XtremIO data volumes. Solution database

design

14 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Database profiles

Table 5 details the OLTP and OLAP database profiles for the solution.

Table 5. OLTP and OLAP database profiles

Item Details

Database size 250 GB

SQL Server databases 30 x 250 GB OLTP

20 x 250 GB OLTP mount 1 x 4 TB OLAP

1 x 4 TB OLAP mount

Memory for SQL Server 150 GB to 320 GB

Workload profiles OLTP workload simulated by an industry-standard OLTP

workload toolkit

OLTP read/write ratio of 90/10, 70/30, 60/40

OLAP workload simulated by an industry-standard OLAP workload toolkit

OLAP 100% reads

Average data block sizes OLTP reads: mainly 8K index seeks

OLTP log writes: 4K to 60K (mainly ~4K) OLTP Background writer: 8K or 32K (mainly 8K) OLAP reads: 64K or 128K

Database file layout OLTP: 8 data files, 1 log file

OLTP tempdb: 8 data files, 1 log file OLAP: 64 data files, 1 log file

OLAP tempdb: 32 data files, 1 log file

Checkpoint configuration OLTP: Indirect checkpoint, 60-second recovery interval

OLAP: Default configuration (Automatic checkpoint) max degree of parallelism

(MAXDOP) configuration OLTP: MAXDOP 1 OLAP: various MAXDOP settings Database LUN design

Table 6, Table 7, and Table 8 provide details of the database LUN design for the solution.

Table 6. LUN design details for the OLTP databases

Item Production OLTP AlwaysOn AG mounts OLTP Instance 1 Instance 2 Instance 3 Instance 4 Instance 5

Database name

DB_10 to DB_19

Tempdb DB_20 to DB_29

Tempdb DB_30 to DB_39

Tempdb DB_30s to DB_39s

Tempdb DB_30ss to DB_39ss

Tempdb Database and

log file size

250 GB 250 GB 250 GB 250 GB 250 GB 250 GB 250 GB 250 GB 250 GB 250 GB LUN size 10 x 2 TB 1 x 2 TB 10 x 2 TB 1 x 2 TB 10 x 2 TB 1 x 2 TB 10 x 2 TB 1 x 2 TB 10 x 2 TB 1 x 2 TB

Table 7. LUN design details for the OLAP database Item Production OLAP

Instance 6

Database name DB_DW tempdb

Database and log file size 4 TB 1 TB

LUN size 8 x 2 TB 4 x 2 TB

Log LUN size 1 x 2 TB 1 x 2 TB Table 8. Total sizes for OLTP and OLAP databases

Database Total data and log size Total LUN size

Production 11.5 TB (excluding tempdb)

13.25 TB (including tempdb)

78 TB (excluding tempdb) 93 TB (including tempdb) AlwaysOn AG

mounts 5 TB (excluding tempdb) 5.5 TB (including tempdb) 42 TB (excluding tempdb) 44 TB including tempdb)

Notes:

• This database LUN design is based on our test workload. In a production environment,

database size, especially log file and tempdb size, can vary depending on the types of transactions and queries that are running on the databases.

• AppSync copies can be mounted on a SQL Server mount host and enabled for clients to

connect. The suffix s in the database name indicates a first-generation copy1. For example, DB_30s is a first-generation copy of database DB_30 that is mounted on the mount host. The suffix ss in the database name indicates a second-generation copy. For example, DB_30ss is a second-generation copy of database DB_30 taken from DB_30s and mounted on the mount host.

The VSI plug-in enables VMware administrators to easily set ESXi host parameters to the recommended settings for XtremIO. VSI presents the recommended settings through a GUI wizard, as shown in Figure 5. This greatly simplifies and accelerates the provisioning process. As a best practice, we used the recommended settings when configuring the ESXi hosts for the solution.

1 A first-generation copy is a copy that is taken from a database. A second-generation copy is a copy that is taken from a first-generation copy.

Best practice settings for ESXi hosts on XtremIO

16 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

White Paper

Figure 5. Configuring ESXi host recommended settings for XtremIO with VSI

For more information on using VSI, refer to the EMC VSI for VMware vSphere Web

Client Product Guide.

For the solution, we used AppSync to create and manage database-consistent XVC copies of the SQL Server databases. We validated AppSync with AlwaysOn AG by seeding, restoring, and repurposing an availability group. This section outlines the AppSync options involved in these tasks.

For more information on using AppSync to create and manage copies of SQL Server databases, refer to the EMC AppSync User and Administration Guide.

Seeding an AlwaysOn AG availability group

The AlwaysOn AG feature supports a failover environment for a discrete set of user databases (availability databases) that fail over as a group. An availability group supports a set of primary databases and up to four (SQL Server 2012) or eight (SQL Server 2014) sets of corresponding secondary databases.

To add databases to an availability group, you must restore backups of the primary databases to the server instance that hosts the secondary replica. This is a time consuming process that requires multiple steps for multiple databases. With AppSync and XtremIO, however, the process is consolidated into a simple streamlined operation.

AppSync copy management

To validate database seeding, we protected 10 availability databases as a group on the primary instance, during a maintenance window. We then restored all the databases in a single operation by using the Mount Copy of SQL Server wizard in AppSync, as follows:

1. Select the first database copy in the availability group and select Mount. 2. In the Mount Additional Copies window, select the other nine databases, as

shown in Figure 6.

3. Follow the standard AppSync procedures to mount and recover the databases.

Figure 6. Restoring 10 databases for an availability replica in a single operation

Restoring a SQL Server OLAP database after a failed maintenance operation

Subscribing a database to an AppSync service plan indicates that you want to protect the database by scheduling reoccurring copy creation. As the service plan runs, copies of the database are created and stored at the required intervals, facilitating point-in-time recovery.

To validate database recovery, we simulated a failed maintenance operation on a 4 TB OLAP database by dropping a table. We then used AppSync to select the most recent copy from the service plan and quickly restored the database to the state that it was in before the table deletion.

Making multiple repurposed copies

An availability replica is held on an instance of SQL Server and contains a copy of all availability databases in an availability group. Availability replicas support two availability modes: asynchronous commit mode and synchronous commit mode. The asynchronous commit mode enables agile, near realtime copies of the database to be taken at any time without affecting production performance. To validate

AppSync with this mode, we used an asynchronous replica as a source for creating multiple repurposed copies of the databases, making first- or second-generation copies of three to five databases at one time.

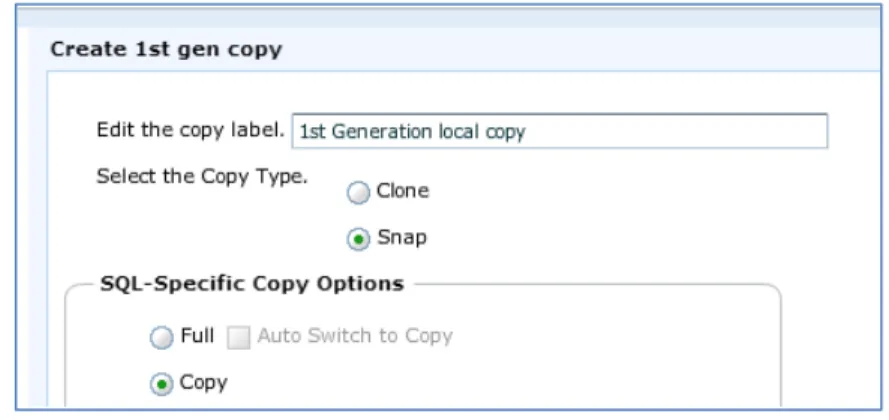

You must use the SQL Server copy-only option when backing up from an availability replica. In AppSync, select the Snap and Copy options when creating the copy, as shown in Figure 7.

18 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Performance and scalability testing

The performance and scalability tests we performed for this solution highlight how XtremIO easily services vastly different and competing enterprise workloads while all elements, including storage, stay within the green zone, which is a state of utilization and latency that is healthy and sustainable for production workloads.

For the tests, we:

• Generated OLTP workloads using an industry-standard OLTP workload toolkit application that produces realworld transaction-oriented workloads

• Generated OLAP workloads using an industry-standard OLAP workload toolkit application that produces realworld data analytics workloads

• Ran the workloads on a dual-brick XtremIO system that we configured according to best practices

• Collected system I/O performance metrics, including IOPS, transactions per second (TPS), and latency, at the server (database) and storage levels Figure 8 summarizes the performance profile for all the performance tests.

Figure 8. XtremIO performance profile for all performance tests

Overview

20 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Notes on performance results

• Test results are highly dependent on workload, specific application requirements, and

system design and implementation. Relative system performance will vary because of these and other factors. Therefore, you should not use the solution workloads as a substitute for a specific customer application benchmark for critical capacity-planning and product-evaluation decisions.

• All performance data contained in this white paper were obtained in a rigorously

controlled environment. Results obtained in other operating environments might vary significantly. EMC does not warrant or represent that a user can or will achieve similar performance.

Test objectives

The overall test objectives were to demonstrate: • Performance and scale

In a series of tests, we measured SQL Server 2014 overall performance and scalability when servicing common OLTP workloads with varying I/O profiles. • Baseline OLTP workload

We created a baseline OLTP workload to simulate a typical production

environment and demonstrate that this environment delivers performance and scalability for OLTP workloads. We used this workload as the basis for the subsequent tests.

• SQL Server mixed workloads sustainability

XtremIO can deliver high throughput and minimal latency for OLTP and OLAP mixed workloads without the need for workload separation or Quality of Service (QoS).

• Data reduction

One of the most impressive capabilities of XtremIO storage is its inline data reduction features. We addressed several aspects of data reduction as it applies to SQL Server environments.

• Data-at-rest encryption

Business requirements to strengthen security are becoming more and more common. To address these requirements, XtremIO version 4 enables DARE by default. We compared XtremIO DARE to the native SQL Server Transparent Data Encryption (TDE) technology for capacity requirements and performance. • Support for SQL Server AlwaysOn FCI and AlwaysOn AG on vSphere 6.0

We validated XtremIO support for environments that require the protection of SQL Server AlwaysOn FCI and AlwaysOn AG.

• Data repurposing with XVC and AppSync

We used AppSync to create multiple copies of the production databases and measured the performance impact of AppSync operations. We also measured the effect of multiple copies on the overall data reduction rate.

Test scenarios

We tested the following use cases:

• OLTP linear performance and scalability analysis, including:

SQL Server checkpoint impact on variable OLTP workloads

• Baseline SQL Server OLTP workload

• Mixed OLTP and OLAP workload performance analysis, including:

Performance effect of OLAP query spill operations

• DevOps copy management performance analysis • XtremIO DARE and SQL Server TDE comparison • Data reduction analysis, including:

SQL Server AlwaysOn AG and AppSync copy management

• XtremIO data reduction with SQL Server row, page, and columnstore compression

The objective of this test was to measure SQL Server 2014 overall performance and scalability while servicing common OLTP workloads with varying I/O profiles. The test simulated a wide spectrum of high-volume, online transactional workloads that are seen in financial services systems, online gaming solutions, e-commerce solutions, and so on. The main metrics we measured were read and write I/O latencies,

aggregated throughput in IOPS, and SQL Server TPS. The test also demonstrated how an XtremIO system can accommodate growing database workloads and continue to provide stable performance.

In addition, we examined the effect of the SQL Server checkpoint on OLTP workloads, with variable read/write ratios, executed against one of the test databases. This test is described separately at the end of the section.

Test methodology

We used the OLTP workload toolkit to generate an OLTP workload to drive high physical random I/O to the databases.

We configured the toolkit so each database had a fixed number of concurrent users. All the databases ran the same set of OLTP queries. Controlling the number of concurrent users and databases ensured that we generated a specific level of IOPS. Test procedure

1. We ran an OLTP workload for the first database and recorded the system performance after the workload stabilized.

2. We added a workload to four additional databases while the previous workload was still running and recorded system performance after the workload stabilized.

3. We continued adding workloads and recording system performance in increments of five databases until all databases were running with a stabilized workload.

OLTP linear performance and scalability analysis

22 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

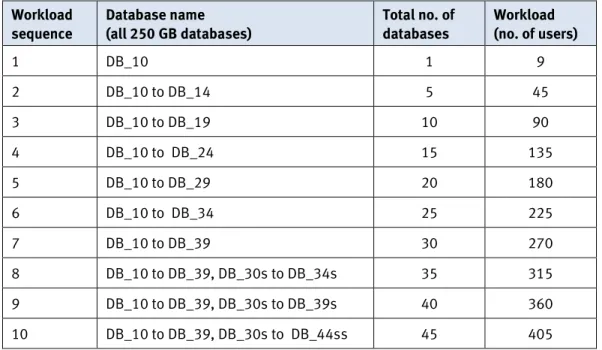

Table 9 shows the test load sequence; all workloads had a read/write ratio of 90:10. For details about the database profile and configuration, refer to Table 5 on page15.

Table 9. Test workload sequence Workload

sequence

Database name (all 250 GB databases)

Total no. of databases

Workload (no. of users)

1 DB_10 1 9

2 DB_10 to DB_14 5 45

3 DB_10 to DB_19 10 90

4 DB_10 to DB_24 15 135

5 DB_10 to DB_29 20 180

6 DB_10 to DB_34 25 225

7 DB_10 to DB_39 30 270

8 DB_10 to DB_39, DB_30s to DB_34s 35 315

9 DB_10 to DB_39, DB_30s to DB_39s 40 360

10 DB_10 to DB_39, DB_30s to DB_44ss 45 405

Test results

Overall, the average latency remained low for the XtremIO array, while the added database workloads generated more I/O. The entire system generated over 90,000 TPS, with an average of 300,000 IOPS when all database workloads were added and stabilized, as shown in Figure 9. The XtremIO array latency remained at approximately 1 ms and the host’s average disk latency ranged from less than 1 ms to 2 ms.

XtremIO system performance

XtremIO provided extremely high IOPS and throughput with very low latency and a high overall SQL Server transaction rate, as shown in Figure 10.

Figure 10. XtremIO performance with full OLTP workload XtremIO SSD and XDP linear scaling

XtremIO N-way, active/active, scale-out architecture linearly scales capacity and performance, creates extremely high IOPS or throughput, and maintains extremely low latency.

The XtremIO Management Server (XMS) GUI reporting feature facilitates the capture of realtime performance data. We set up Resource, IOPS, and Latency reports and observed them while the OLTP workload was running. As shown in the Figure 11, regardless of the number or source of the database workload IOPS , XtremIO balances I/O processing evenly across storage processors and SSDs .

1

2 3

24 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Figure 11. XtremIO SSD and XDP linear scaling

SQL Server checkpoint impact on variable OLTP workloads

The objective of this test was to measure the impact of the SQL Server database checkpoint on variable OLTP workloads.

Transactional modifications to data pages are performed in memory and periodically written to disk. A data page might be modified multiple times before it is written to disk. A combination of the workload profile and the checkpoint type used determines the frequency and intensity of checkpoint write IOPS:

• Automatic checkpoint—Executes less frequently than other checkpoint types, but under a write-heavy workload might cause an I/O spike and affect

transaction processing. This is the default checkpoint, and is used for OLAP workloads in this solution (see Table 5 on page 15).

• Indirect checkpoint—Can offer a more consistent I/O pattern, but can also increase the total percentage of write IOPS. This is the checkpoint type used for OLTP workloads in this solution (see Table 5 on page 15).

For this test scenario, we tested both checkpoint types on a single OLTP database— DB_20 (250 GB)—while varying the read/write ratio of the workload. As shown in Figure 12, we completed three tests, increasing the percentage of write I/Os for the second and third tests.

For all three tests, the indirect checkpoint delivered consistent latency and higher TPS than the automatic checkpoint, but with a greater percentage of write I/O. The XtremIO array delivered sub-millisecond latency across all tests.

Figure 12. OLTP database performance with automatic and indirect checkpoints

The objective of this test was to demonstrate that performance and scalability for typical OLTP workloads on XtremIO can be achieved using a highly simplified storage layout while still delivering a highly responsive solution. The layout uses the

minimum number of required LUNs—that is, one LUN for each database. Database data and log files can be consolidated on the same LUN to simplify design. Table 6 on page 15 outlines the storage layout.

Note: The OLTP workload used in this test served as a baseline for the remaining tests

documented in this paper. Test methodology

We used the OLTP workload toolkit to generate an OLTP workload to drive physical random I/O to the databases.

We configured the toolkit so each database had a fixed number of concurrent users. All the databases ran the same set of OLTP queries. Controlling the number of concurrent users and databases ensured that we generated a specific level of IOPS. Baseline SQL

Server OLTP workload

26 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Test procedure

We ran an OLTP workload for 30 production databases and recorded the system performance for one hour after the workload stabilized.

Test results

With a simplified storage layout, the XtremIO array can service all the OLTP workloads while maintaining average sub-millisecond latency for all databases. As shown in Figure 13, the average latency variance between all databases was just

60 microseconds.

Figure 13. I/O profile of baseline OLTP workload

Figure 14 charts the execution count, the logical reads and writes, and the physical reads, for all procedures executed against one of the production databases in the one-hour period. The data was recorded by and analyzed from the SQL Server sys.dm_exec_procedure_stats dynamic management view (DMV).

Figure 14. OLTP production database page reads and writes: logical versus physical

Note: To process an OLTP workload, SQL Server reads and writes data pages. A logical read

refers to an SQL Server read of a data page. A physical read refers to retrieval of the data page from disk because the page is not in the buffer pool (an area of memory reserved for SQL Server data pages). A logical write refers to an SQL modification to a data page. On NUMA hardware, modifications to data pages are eventually written to disk by the lazy writer during a checkpoint.

The SQL Server OLTP database workload used for this solution produced an I/O pattern of predominantly 8K reads for seeks and 8K lazy writer commits. However, SQL Server transaction performance is a function of read latency for scans, seeks, and lookups, write latency for lazy writer commits, and writes to the transaction log. Table 10 shows the Access Methods, Buffer Manager, and Databases activity for the test workload. This activity can produce a varied I/O pattern and block size.

Table 10. Access methods and Buffer Manager activity for OLTP workload Counter name Value

SQL Server: Access Methods

Full Scans/sec 70.554

Index Searches/sec 2,301,413.787

Range Scans/sec 11,282.115

Workfiles Created/sec 3.167

Worktables Created/sec 1.192

28 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Counter name Value SQL Server: Buffer Manager

Background writer pages/sec 152.762

Lazy writes/sec 1,500.088

Page lookups/sec 4,842,695.642

Page Reads/sec 23,694.463

Page Writes/sec 1,652.856

Readahead pages /sec 3,754.080

SQL Server: Databases

Log Bytes Flushed/sec 1,098,465.893

Log Flushes/sec 181.955

To maximise SQL Server transaction processing, a well-designed I/O subsystem needs to perform well with this varied I/O pattern and block size. With XtremIO, no additional design considerations are required to satisfy this requirement. For the test workload, the array responded to all request sizes with sub-millisecond latency.

SQL Server start-up performance

The test OLTP workload had an I/O pattern of primarily 8K reads for page lookups. One exception to this pattern occurs during buffer cache ramp-up2, when SQL Server transforms 8K requests into aligned 64K requests.

In the test environment, the 10 OLTP databases on each OLTP instance share a 150 GB buffer cache. We analyzed the performance data to determine the ramp-up time for the SQL Server buffer cache on XtremIO. Figure 15 shows that it took just 3 mins and 38 secs for SQL Server to reach its memory target. After this initial period, the database TPS permanently stabilizes.

2 Buffer cache ramp-up refers to the time it takes to fill the buffer cache up to its memory target after SQL Server start-up or a restart.

Figure 15. SQL Server ramp-up time on XtremIO

Figure 16 shows activity in MB/s and demonstrates that the XtremIO array responds to both the ramp-up 64K requests and the subsequent 8K requests with

sub-millisecond latency.

Figure 16. XtremIO response to 64K ramp-up and 8K OLTP workload processing requests

30 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

To guarantee predictable performance for consolidated workloads, administrators typically separate the IT resources allocated to competing workloads like OLTP and OLAP. They might also implement QoS mechanisms to maintain the agreed SLAs for each workload. The objective of this test was to demonstrate that, with XtremIO, multiple competing and completely different workloads can run simultaneously on the same array, leveraging the same pool of storage devices, yet provide high throughput with minimal latencies.

We performed an additional test to review the performance effect of OLAP query spill operations. The array responded to simultaneous tempdb read and write requests with an average response time of less than 700 microseconds. See Appendix > SQL Server tempdb and OLAP queries for details.

Test methodology

We used the OLTP workload toolkit to generate an OLTP workload against our 30 production databases. The workload pattern was identical to that used in the baseline OLTP test (see Baseline SQL Server OLTP workload).

We used the OLAP workload toolkit to generate an OLAP workload against the SQL Server 2012 4 TB Data Warehouse (DW) database.

We initially configured the OLAP database as a rowstore without compression. During the test procedures, we implemented in-memory columnstore indexing compression. Test procedure

1. As an OLTP-only baseline, we used the production workload performance metrics obtained in the baseline OLTP test (see Baseline SQL Server OLTP workload).

2. To gather baseline performance for the rowstore OLAP-only workload, we stopped the production workload and ran the OLAP workload.

3. With the production OLTP workload running again, we introduced the

rowstore OLAP workload and gathered performance metrics. We analyzed the data obtained to ensure that baseline OLTP production performance was still achievable.

4. We added columnstore indexing to the OLAP DW database.

5. To gather baseline performance for the columnstore OLAP-only workload, we stopped the production workload and ran the OLAP workload.

6. With the production OLTP workload running again, we introduced the

columnstore OLAP workload and gathered performance metrics. We analyzed the data obtained to ensure baseline OLTP production performance was still achievable.

Test results

Overall, the impact of introducing OLAP workloads into an XtremIO array with an existing production OLTP workload was less than 2 percent. High bandwidth OLAP scans on the same array had a minimal impact on OLTP latencies and throughput. For Mixed OLTP and

OLAP workload performance analysis

all workload tests, the XtremIO array continued to maintain an overall average latency of less than 1 ms.

Host performance results: OLTP workloads

As shown in Figure 17, when expressed in host-side IOPS and TPS, the impact on the production OLTP workload was less than 2 percent when either the rowstore or the columnstore OLAP workloads were introduced on the same XtremIO array.

Figure 17. Sustaining OLTP workload performance with mixed OLAP workloads Host performance results: OLAP query processing

OLAP consists of a mixture of long running queries that interrogate large DW data sets and shorter running queries that scan ranges of data. The queries are usually

characterized by how long they take to run.

We took performance data collected from the SQL Server sys.dm_exec_query_stats DMV and aggregated the workload reads for both the rowstore and columnstore tests. As shown in Table 11, introducing columnstore indexing reduced the overall volume of both the physical and logical processing required to satisfy the same set of OLAP DW queries.

Table 11. Aggregated workload processing for both rowstore and columnstore tests

Workload Type Logical Reads Physical Reads OLAP Columnstore 24,383,263 12,216,239 OLAP Columnstore + OLTP 24,383,248 12,221,930 OLAP ROWSTORE 336,295,252 336,294,989 OLAP ROWSTORE + OLTP 336,295,252 336,294,989

32 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

We compared the query processing times for the baseline OLAP-only workloads (rowstore and columnstore) against the processing times when the OLAP workloads was introduced to the XtremIO array where the production OLTP databases were running. For both the rowstore and columnstore workloads, the total query

processing time was less than 2 percent more when the workloads were introduced on the same array as the OLTP databases, as shown in Figure 18.

Figure 18. OLAP batch processing time for rowstore versus columnstore workloads

While columnstore indexing can significantly improve the processing time of large volume DW queries, it does so at an increased CPU cost. For the test OLAP workloads, the CPU requirement almost doubled for the columnstore queries, as shown in Figure 19.

XtremIO array performance results: bandwidth

SQL Server OLTP IOPS are predominantly random lookups of 8K block size. OLAP workloads are larger sequential scans of mainly of 64K and 128K block size. As shown in Figure 20, when we introduced the OLAP mixed workload to the same XtremIO array as the OLTP workload, the array continued to service the OLTP 8K blocks in a near linear fashion.

Figure 20. XtremIO bandwidth for OLTP and OLAP block sizes

34 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

XtremIO array performance results: latency

SQL Server OLTP workloads are highly dependent on I/O service times. As shown in Figure 21, the OLTP volumes continued to service the databases with sub-millisecond response times during both rowstore and columnstore mixed workload tests.

Figure 21. XtremIO volume latency for OLTP and OLAP volumes XtremIO array performance results: storage processors

The XtremIO array responds to mixed workloads by load balancing the I/O processing across the array regardless of the source or type of workload. As shown in Figure 22, the array balances both OLTP and OLAP workloads uniformly across all storage processors.

Figure 22. XtremIO Xenv storage processor performance for mixed workloads

Software development groups have a constant requirement for agile creation and management of multiple software builds. XtremIO offers an extremely fast and efficient way to create and serve copies to support that demand.

Test methodology

We used the OLTP workload toolkit to generate an OLTP workload against our 30 production databases and 10 DevOps databases. The production workload pattern was identical to that used in the baseline OLTP test (see Baseline SQL Server OLTP workload).

We used the OLAP workload toolkit to generate an OLAP workload against the production and DevOps DW databases.

Test procedure

1. With the production OLTP workload running (see Baseline SQL Server OLTP workload) with the additional load of an AlwaysOn AG replica, we introduced the OLTP DevOps workload and gathered performance metrics. We analyzed the data obtained to ensure that array performance was sustained.

2. With the production, AlwaysOn AG and DevOps OLTP workloads running, we introduced the OLAP production workload and gathered performance metrics. We analyzed the data obtained to ensure that array performance was

sustained. DevOps copy

management performance analysis

36 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

3. With the production, AlwaysOn AG, DevOps OLTP, and production OLAP workloads running, we introduced the OLAP DevOps workload and gathered performance metrics. We analyzed the data obtained to ensure that array performance was sustained.

Test results

As shown in Figure 8, the XtremIO array that was running the production workloads continued to maintain near sub-millisecond average latency when we introduced the DevOps workloads. Table 12 shows the workload performance breakdown for each step in the test procedure.

Table 12. Workload performance breakdown for DevOps performance tests

Workload Average bandwidth (MB/s) Average IOPS XtremIO average latency (ms) Production / DevOps (1)

Production

30 x 250 GB OLTP databases

10 x 250 GB OLTP AlwaysOn AG databases

1,297 163,250

794

DevOps

10 x 250 GB OLTP databases 376 46,453

Production / DevOps (2) Production

30 x 250 GB OLTP databases

10 x 250 GB OLTP AlwaysOn AG databases

1,214 155,084

1,011

DevOps

10 x 250 GB OLTP databases 308 39,044

Production

1 x 4 TB OLAP DW database 805 11,358

Production / DevOps (3) Production

30 x 250 GB OLTP databases

10 x 250 GB OLTP AlwaysOn AG databases

1,223 156,270

1,097

DevOps

10 x 250 GB OLTP databases 297 37,560

Production

1 x 4 TB OLAP DW database 876 12,370

DevOps

1 x 4 TB OLAP DW database 181 2,559

This test demonstrates that production and DevOps OLTP and OLAP workloads can run simultaneously on the same XtremIO array.

Data-at-rest encryption (DARE) provides a solution for securing critical data even when the media is removed from the array. XtremIO arrays use a high performance, inline encryption technique to implement DARE and ensure that all data stored on the array is unusable if the SSD media is removed. DARE is implemented by default on new installs of XtremIO version 4 and can be turned on in previous versions. SQL Server TDE is the native SQL Server encryption-at-rest technology for database data and log files. When TDE is implemented on any database on a SQL Server instance, the SQL Server system tempdb database is also encrypted. SQL Server data pages are encrypted and decrypted when they are transferred to and from disk. They remain decrypted in memory, so the more I/O dependent the workload, the greater the impact of TDE on performance. TDE can also impact storage capacity.

XtremIO DARE offers superior performance over TDE, offloads encryption processing to the array, and does not have the physical capacity impact associated with TDE. For more information about XtremIO DARE, refer to the XtremIO Data at Rest Encryption

White Paper.

Note: DARE does not protect encrypted files from OS-level operations such as file copy.

Test methodology

We used the OLTP toolkit to generate an OLTP workload against the 30 production databases in our test environment. The workload pattern was identical to that used in the baseline OLTP test (see Baseline SQL Server OLTP workload).

We used the OLAP toolkit to generate an OLAP workload against the SQL 2012 4 TB DW database.

We implemented TDE on a single OLTP and a single OLAP database.

The XtremIO array is DARE-enabled by default; we used the metrics obtained in previous tests as baselines against which to assess the impact of TDE on performance.

Test procedure

• As an OLTP-only baseline, we used the production workload performance metrics obtained in previous tests.

• We implemented TDE compression using the AES_128 algorithm on one of the OLTP production databases. After confirming that the TDE enablement process was complete, we gathered performance metrics from the production workload for one hour. We interpreted the data obtained to measure the capacity and performance impact.

• As an OLAP-baseline, we used the performance metrics obtained in previous tests.

• We implemented TDE compression using the AES_128 algorithm on the OLAP database and used the same process as we did for OLTP to gather performance metrics. We interpreted the data obtained to ensure measure the capacity and performance impact.

XtremIO DARE and SQL Server TDE comparison

38 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Test results

Implementing TDE resulted in a reduction in performance of up to 30 percent

compared to XtremIO DARE only, as measured by OLTP TPS and OLAP query duration. There was a clear correlation between the amount of I/O required to process a request and the impact of TDE, as shown in Figure 23. For OLTP, the impact was up to 5 percent; for large OLAP scans, the impact was up to 30 percent.

Figure 23. Impact of TDE on OLTP and OLAP workloads

Microsoft volume maintenance tasks are not available with TDE because the SQL Server data and log files are encrypted. As shown in Figure 24, this increases the space that SQL Server consumes, and has consequences for XtremIO physical capacity.

Considerations for using TDE

• Instant file initialization is not available when TDE is enabled. Furthermore, space savings from features such as array compression and thin provisioning are not available.

• Any copies taken before TDE is implemented continue to be zero optimized (zero disk for pre-allocated space) and array compressed. However, because the data is completely different in the TDE copy, the copy will no longer share any physical data blocks with the source volume.

• New copies taken after encryption benefit from XtremIO deduplication savings, even copies of encrypted databases.

• Consider using a combination of XtremIO DARE and SQL Server (2014 only) backup encryption or EMC Avamar® backup encryption to ensure the database at rest is encrypted at all times.

XtremIO data deduplication and data compression technologies complement each other. Data deduplication reduces physical data by eliminating redundant data blocks; data compression further reduces the data footprint by eliminating data redundancy within the binary level of each data block.

We performed a series of tests to determine the average compression ratio of SQL Server in our environment and the impact of compression on throughput and latency for the production instances.

Copy database data reduction rate

With XtremIO, you can achieve and sustain logical data capacity on the storage array that exceeds, by a large margin, the physical flash capacity in the system. This is possible by using the combination of thin provisioning, compression and

deduplication, and close-to-zero-cost copies (when present).

In this test, we used AppSync to automatically create multiple XVC copies of the production databases, mount the copies to a non-production host, and recover the database copies. We measured the performance impact of these operations and the overall data reduction.

The effective space of the volumes created on XtremIO for the 30 production OLTP databases is 78 TB, as detailed in Table 8. After we created six sets of copies of the production databases, and a single set of 20 x 2 TB copies for the AlwaysOn AG replica—a total of 254 copies—the addressable logical volume capacity increased to 588 TB, as shown Figure 25.

Data reduction analysis

40 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Figure 25. XtremIO XVC copy data efficiency

This 588 TB is composed of both production volumes and copy volumes. The copy volumes can be accessed for primarily read-intense workloads, operational recovery, and destructive data repurposing such as DevOps.

The initial amount of physical storage required to create copies in an XtremIO array is negligible (less than 1 percent of the parent volume), as shown in the XtremIO XMS GUI in Figure 26.

Figure 26. XtremIO physical capacity shows little change when data is repurposed No. of

virtual copies

Total Physical Capacity (TB)

Initial Physical Capacity Used

(TB)

SQL Server Data (TB)

Addressable Logical Volume Capacity in XtremIO (TB)

0 30.49 15.25 11.5 100.6

39 30.49 15.25 23.0 178.6

78 30.49 15.25 34.5 256.6

117 30.49 15.25 46.0 334.6

156 30.49 15.25 57.5 412.6

195 30.49 15.25 69.0 490.6

234 30.49 15.25 80.5 568.6

Note: In the XtremIO GUI, the data reduction rate achieved by the database volumes refers to production volumes only (not copies), so the ratio remained at 2.0:1 after copy creation. Production database data reduction rate

With data reduction, the XtremIO effective system capacity can expand beyond the physical capacity. In environments that contain data with a significant amount of duplication, the effective logical capacity of XtremIO can be much higher than its physical flash capacity.

The SQL Server production databases do not typically benefit from deduplication. Copies of a database on the same array are deduplicated. The compression ratio observed in the tests ranged from 1.7:1 to 2.0:1, depending on the data in the SQL Server data mix. The average data reduction ratio typically observed in SQL Server environments is around 2.0:1, not including the copy data reduction effect. SQL Server AlwaysOn Availability Groups

In this test, we measured the time to add replica databases to one AlwaysOn AG secondary replica in asynchronous commit mode and to another secondary replica in synchronous commit mode. For both replicas, we created AlwaysOn AG secondary copies of ten 250 GB production databases. On XtremIO, there is an initial close-to-zero-cost space requirement when setting up an AlwaysOn AG secondary replica on the same array as the primary replica.

Provisioning time for AlwaysOn AG database replicas

Provisioning an AlwaysOn AG database replica requires multiple steps. As shown in Figure 27, we performed the following steps on each replica:

1. Perform a full backup of the ten production databases on the primary replica. 2. Provision storage for the databases on the secondary replica.

3. Restore (with no recovery) the databases on the secondary replica. 4. Restore the log files on the secondary replica.

5. Add the databases to the availability group on the secondary replica. For the first of the secondary replicas, we performed all the steps using only the native Microsoft tools. For the second replica, we used AppSync (during a

maintenance window) to protect the databases and mount them to the replica; we then used the native Microsoft tools to restore the logs and add the databases to the secondary replica.

As shown in Figure 27, AppSync can significantly reduce the time to provision databases into an AlwaysOn AG secondary replica. When using AppSync, we

provisioned the databases 10 times faster than when using the native Microsoft tools only. The initial amount of physical storage required for both methods was minimal (about 1 percent of the parent volume.

42 EMC XtremIO Workload Consolidation and Copy Management for Microsoft SQL Server

Figure 27. Time to add 10 databases to an AlwaysOn AG availability group replica

Using AlwaysOn AG secondary replicas and AppSync to create multiple repurposed copies

In this test, we measured the performance impact on the AlwaysOn AG primary production databases when taking first- and second-generation database-consistent copies from the asynchronous secondary replica. We also measured the impact on the AlwaysOn AG send and redo queues for both the asynchronous and synchronous secondary replicas.

With the baseline production OLTP workload running, we reviewed the AlwaysOn Dashboard to ascertain the status of the availability replicas. As shown in Figure 28, all replicas were in the green state.

Figure 28. The AlwaysOn Dashboard

With the baseline production OLTP workload running, we used AppSync to create three sets of database copies from the asynchronous secondary replica. The first set was for first-generation copies; the second set was for first- and second-generation copies; the third set was for a copy refresh.

As shown in Figure 29, taking the copies had no effect on the production OLTP database TPS or on the log send and recovery queues for the synchronous secondary replica. The asynchronous secondary replica’s redo queue immediately recovered from a minor spike3.

3 When using AppSync to perform a full backup, make sure that overall backup and restore

procedures for the database are not affected.