ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

Auditing of Cloud Data with Aware

Scheduling Algorithm

Dr.T.Sankar

1, Mr.R.Karthikeyan

2Assistant Professor, Department of Computer Science , K.S.R College of Arts & Science, Tamil Nadu, India1

Assistant Professor, Department of Master of Computer Applications, Gnanamani College of Technology,

Tamil Nadu, India2

ABSTRACT: Cloud computing opens a new era in IT as it can provide various elastic and scalable IT services in a pay-as-you-go fashion, where its users can reduce the huge capital investments in their own IT infrastructure. In this philosophy, users of cloud storage services no longer physically maintain direct control over their data, which makes data security one of the major concerns of using cloud. Existing research work already allows data integrity to be verified without possession of the actual data file. When the verification is done by a trusted third party, this verification process is also called data auditing, and this third party is called an auditor. First, a necessary authorization/authentication process is missing between the auditor and cloud service provider, i.e., anyone can challenge the cloud service provider for a proof of integrity of certain file, which potentially puts the quality of the so-called „auditing-as-a-service‟ at risk; Second, although some of the recent work based on BLS signature can already support fully dynamic data updates over fixed-size data blocks, they only support updates with fixed-sized blocks as basic unit, which we call coarse-grained updates. As a result, every small update will cause re-computation and updating of the authenticator for an entire file block, which in turn causes higher storage and communication overheads. Theoretical analysis and experimental results demonstrate that our scheme can offer not only enhanced security and flexibility, but also significantly lower overhead for big data applications with a large number of frequent small updates, such as applications in social media and business transactions.

KEYWORD: Cloud Storage Server(CSS), Cloud Service Provider(CSP), Third Party Auditor(TPA)

I. INTRODUCTION

Cloud computing is a processing worldview, where an enormous pool of frameworks are associated in private or open systems, to give progressively adaptable foundation to application, information and record stockpiling. With the appearance of this innovation, the expense of calculation, application facilitating, content stockpiling and conveyance is decreased fundamentally. Distributed computing is a functional way to deal with experience direct money saving advantages and it can possibly change a server farm from a capital-escalated set up to a variable estimated condition. Cloud processing depends on a basic central of reusability of IT abilities'. The distinction that distributed computing brings contrasted with customary ideas of "network processing", "appropriated figuring", "utility registering", or "autonomic figuring" is to expand skylines crosswise over authoritative limits. Forrester characterizes distributed computing as: "A pool of preoccupied, very versatile, and oversaw register framework equipped for facilitating end-client applications and charged by utilization."

Enterprises be able to prefer to deploy applications on Public, Private or Hybrid clouds. Cloud Integrators preserve participate a vital part in choosing the right cloud path for each party.

Public Cloud

ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

and accessibility changes. These are overseen and bolstered by the cloud supplier. One of the benefits of a Public cloud is that they might be bigger than a ventures cloud, along these lines giving the capacity to scale consistently, on interest.

Private Cloud

Private cloud are manufactured only for a solitary endeavor. They plan to address worries on information security and offer more noteworthy control, which is regularly ailing in an open cloud. There are two varieties to a private cloud:

• On-premise Private Cloud: On-premise private cloud, otherwise called interior mists are facilitated inside ones possess server data This model gives a progressively institutionalized procedure and insurance, yet is restricted in parts of size and versatility. IT offices would likewise need to bring about the capital and operational expenses for the physical assets. This is most appropriate for applications which require unlimited authority and configurability of the foundation and security.

• Externally hosted Private Cloud: This kind of private cloud is facilitated remotely with a cloud supplier, where the supplier encourages a selective cloud condition with full certification of security. This is most appropriate for ventures that don't incline toward an open cloud because of sharing of physical assets.

Hybrid Cloud

Hybrid brid Clouds consolidate both open and private cloud models. With a Hybrid Cloud, specialist organizations can use outsider Cloud Providers in a full or incomplete way hence expanding the adaptability of processing. The Hybrid cloud condition is equipped for giving on-request, remotely provisioned scale. The capacity to increase a private cloud with the assets of an open cloud can be utilized to deal with any sudden floods in outstanding task at hand.

Figure 1: Deployment Models

1.1 Data Storage Auditing Model

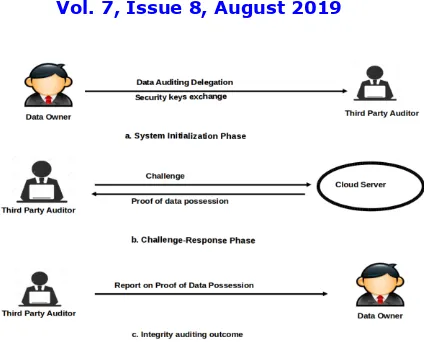

The exhibit that perceives a Third Party Auditor other then information proprietor to affirm information genuineness is named as open checking structure. This framework involves Information proprietor, CSS and a Third assembling analyst. Occupation of Third assembling inspector in open affirming framework is clarified underneath: a. Third Party Auditor (TPA): is a developed individual having resources, and capacities to get to organizations coordinated by dispersed vault server. Information proprietor may demand TPA to check the accomplishment of appropriated storage facility server. The structure framework for open looking at is showed up in Fig. 2. It contains two sorts of correspondences. First correspondence is between data owner and TPA as appeared in Fig. 2.

Private

Hybrid

d

Cloud

ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

Figure 2: Auditing Framework

The data owners make cryptographic keys, gauges metadata of their data to be accumulated on conveyed server. At that point data owner exchanges the cryptographic keys with the TPA and spares data on cloud and goes disconnected. Second correspondence is in the midst of TPA and CSS as appeared in Fig. 2b. The truthfulness confirmation is done by means of a test reaction checking show. This input confirming show has three stages: Challenge, Authentication, and Verification. Whenever TPA wishes to confirm responsibility for it tosses a test to the far off server. The server gives a proof of ownership of data that is recognized to the TPA. The TPA approves the confirmation for its rightness using the cryptographic keys appropriate and builds up an announcement of responsibility for. This announcement is then passed on to the data owner as appeared in Fig. 2c. This confirmation report from TPA will direct the data owner to assess enrolled cloud specialist organization.

II. RELATED WORK

Contrasted with conventional frameworks, adaptability and flexibility are key points of interest of cloud [1], [2], [7]. In that capacity, proficiency in supporting powerful information is vital. Security and protection assurance on powerful information has been examined broadly in the past [8], [10]. In this paper, we will concentrate on little and regular information refreshes, which is significant on the grounds that these updates exist in many cloud applications, for example, business exchanges and online interpersonal organizations (for example Twitter [12]). Cloud clients may likewise need to part huge datasets into littler datasets and store them in various physical servers for unwavering quality, security saving or effective preparing purposes.

Numerous huge information applications will keep client information put away on the cloud for little estimated however successive updates. A most run of the mill precedent is Twitter, where each tweet is confined to 140 characters in length (which equivalents 140 bytes in ASCII code). They can mean a sum of 12 terabytes of information for each day [3]. Capacity of exchange records in banking or securities markets is a comparative and greater security-overwhelming precedent. In addition, cloud clients may need to part huge scale datasets into littler lumps before transferring to the cloud for security saving [2] or effective planning [4]. In such manner, productivity in preparing little updates is constantly basic in huge information applications.

ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

III. CHALLENGES IN DATA STORE

3.1 Dynamic auditing

As the redistributed data is dynamic naturally, it is fundamental to develop a confirming show that supports for dynamic tasks on re-appropriated data. Homomorphism authenticators are used in an open confirmation strategy to achieve a consistent transmission overhead.

3.2 Collaborative auditing

Various data earnestness checking shows that are applicable for a solitary cloud situation has been as of late proposed and they don't bolster multi cloud conditions. The present appropriated archive structures bolster new Distributed File Systems (DFS) so as to offer ease and area freedom to owner's data.

3.3 Batch verification

Regardless of the way that the cluster checking can unbelievably improve the ability of confirming, while at the same time illustrating the gathering confirmation convention, it is essential to ponder the information handling multifaceted nature and transmission overhead for group tasks.

3.4 Support for blockless verification

A check scheme without the reception of accreditation names and mark conglomeration instruments relies on the server to send the moved lumps to guarantee the uprightness. The disadvantage of this plan is that there is more transmission overhead at the server and furthermore the viability of check plan is influenced.

3.5 Privacy preserving

At the point when data owner convey data to the far off cloud or agent the check the trustworthy outsider, it is fundamental for them that the verifiers or cloud not be given the opportunity to obtain knowledge of the data content or have the option to make a copy of the essential data.

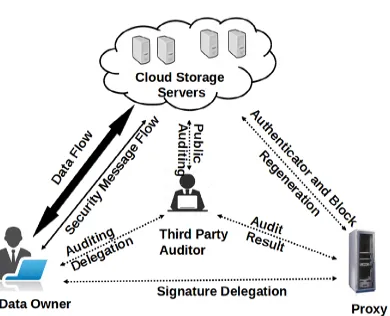

IV. SYSTEM FRAMEWORKS

The reviewing system for Reconstructing-Code-based dispersed storehouse is appeared in Fig.that comprises of four articles: (I) The Data owner, who have a colossal number of data records to be spared in the cloud; (ii) The cloud, gives storage benefits and have important information preparing resources; (iii) The public verifier, has capacity and capability to achieve open confirmation on the coded data in the cloud; (iv) the public

ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

Figure 4: System Framework

The presented public validate factor for the remaking code-based cloud store. To deal with the remaking issue of failed affirmations in the nonappearance of data owners, a middle person is presented, that is required to fix the confirmations, into the standard open checking model. An open obvious accreditation is structured, which is created by a few keys and can be fixed utilizing incomplete keys. This system can totally release data owners from on-line responsibility. Likewise, the cipher coefficients are randomized with a pseudorandom conduct to preserve data classification.

V. AUDITING OF DYNAMIC DATA

Early schedule calculations were performed in grid however lessens the exhibition by requiring schedule ahead of time of assets. In cloud condition because of adaptability of assets, physically distribute assets to undertaking is unimaginable. Planning ought to be done so that it will use the assets productively and furthermore embrace the adjustments in condition arrangements.

1. The Data Owner will create keying materials by means of KeyGen and FileProc after that he transfer the information to CSS. Not quite the same as past plans here in our plan the customer will store a RMHT as metadata.

2. After that the Data Owner will approve the TPA by sharing an esteem sigAUTH.

3. Verifiable DataUpdating: the CSS plays out the Data Owner‟s fine-grained update demands by means of Perform Update on the information.

4. Client runs Verify Update to check whether CSS has acquire update in and out the reports on both the information blocks and the relating authenticators (utilized for Auditing) sincerely.

5. Challenge, Confirmation and Verification: Describes how the respectability of the information put away on CSS is

checked byTPA by means of GenChallenge, GenProof and Verify.

6. In auditable aware data schedule plan the clustering of different undertakings submitted in an application both from the data owner and auditor are prioritized based on the queries.

ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

looking at the present hash estimation of the scrambled record with the past hash esteem it discovers whether uprightness lost or not. In the event that both esteem bungles TPA advises the client and on the off chance that the progressions is done from the client site, at that point TPA will disregard this. To discover the hash esteem markele hash tree is utilized.

5.1 Outsourcing Algorithm

n preliminary processing, we adopt the data fragment technique to split a file F as n blocks and split every block as s sectors. For the sake of security, the size of blocks and sectors is limited by the security parameter. Considering simplicity, here we only refer to each individual file block denoted as F[i], while 1 6 i 6 n. The outsourced big data auditing scheme consists of five algorithms, listed as follows.

(1) Setup(1λ): The prime q is determined by the security parameter λ. The DO generates secret keys {k1, k2, k3} randomly from 𝑧𝑞∗, and defines a XOR-homomorphic function f : 𝑧𝑞∗ × 𝑧𝑞∗ → 𝑧𝑞∗. The DO randomly chooses a

primitive element γ in the Galois field and defines an algebraic signature Sγ. The public parameter params are

{q, f, Sγ}, while the secret key is sk = {k1, k2, k3}.

(2) TagBlock(params, sk, F): The DO chooses n random values {Ri}𝒊=𝟏n

(3) from 𝑧𝑞∗, and then represents each block with an information Infoi = {Indi || Vi || TSi}, where Indi denotes the

index, Vi stands for the version with an initialized value (Vi = 1), and TSi means the time stamp. The DO

computes

Ci = fk1⊕k2⊕k3 (F[i] ⊕ Ri),

~Ci = fk1 (Infoi⊕ Ri),

and the corresponding tag for each file block is Ti = Sγ(Ci || Infoi). The DO sends {F[i], Ti , ~Ci}i=1 n

to the CS

and then deletes the local copy of the file F. At same time, the DO sends k2 and {Infoi}i=1 n

to the TTPA and k3

to the CS through a secure channel.

(4) Challenge: The TTPA selects c random values {vi}i=1 c

with c ≤ n, and sends a challenge request chal = {csi ,

vi}i=1 c

to the CS, where csi denotes the challenged block.

(5) Proof(params, F, Ti , chal): When the CS receives the challenge message chal, it will compute µi = ~C csi⊕ fk3 (F[i] ⊕ vi),

σ = 𝑐𝑖=1T csi

and send prf = ({µi} c i=1, σ) to the TTPA as the proof message.

(6) Verification(params, sk, chal, prf): Upon receiving the proof message prf, the TTPA first computes ~C csi = fk2

(Info csi⊕ vi). Then, the TTPA calculates ωi = µi ⊕ Cb csi . Finally, the TTPA verifies the integrity of the

file F as follows: σ ?=

1 𝑐

𝑖=1 (Sγ (ωi)⊕rγSγ(Infoi))

If the above equation holds, it demonstrates that the file is integrated and well stored on CS; otherwise, the data has been damaged.

VI. AUDITABILITY AWARE SCHEDULING

ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

step increments for the equivalent Priority forms. 3. Higher need procedure has littler holding up time and reaction time. Calculation for planning:

1. for i = 0 to i < main queue-size 2. if priority (task i+1 ) > priority (task i ) 3. then

4. add task i+1 in front of task i in the queue 5. end if

6. end for

in the event that request query originates from TPA to CSP for information reviewing and in the meantime client needs to refresh his information, at that point client will almost certainly update the information first. On the off chance that two TPAs send demand for evaluating a similar record, at that point the request query from first TPA will be served first.

VII. CONCLUSION

Today the case of big data archetype in distributed computing is to store the huge datasets. Significant angle for cloud client is cloud information security and protection. In this paper we have given an execution of approved evaluating and proficient fine grained updates. We have additionally actualized auditability mindful information booking which attempts to use the cloud assets to serve the customers with most extreme throughput. Our proposed framework has three noteworthy segments which give a protected, approved and effective evaluating of information and alteration of information in cloud condition. Security is given by confining produced TPA from inspecting user‟s information without user‟s concern. Effectiveness is accomplished by supporting little updates. This paper executed a need based planning calculation to give legitimate use of cloud assets among the errands coming to CSS. Our proposed work‟s result demonstrates the outcome for content record. We can transfer content document and give fine-grained updates to those record as it is an all around acknowledged arrangement. In future the work will center for various sorts of document. For security reason more layer of verification to TPA will be given.

REFERENCES

1. R.Karthikeyan, & et all ”Biometric for Mobile Security” in the international journal of Engineering Science & Computing, Volume7,Issue6, June 2017, ISSN(0):2361-3361,PP No.:13552-13555.

2. R.Karthikeyan, & et all ”Data Mining on Parallel Database Systems” in the international journal of Engineering Science & Computing, Volume7,Issue7, July 2017, ISSN(0):2361-3361,PP No.:13922-13927.

3. R.Karthikeyan, & et all ”Ant Colony System for Graph Coloring Problem” in the international journal of Engineering Science & Computing, Volume7,Issue7, July 2017, ISSN(0):2361-3361,PP No.:14120-14125.

4. R.Karthikeyan, & et all ”Classification of Peer –To- Peer Architectures and Applications” in the international journal of Engineering Science & Computing, Volume7,Issue8, Aug 2017, ISSN(0):2361-3361,PP No.:14394-14397.

5. R.Karthikeyan, & et all ”Mobile Banking Services” in the international journal of Engineering Science & Computing, Volume7,Issue7, July 2017, ISSN(0):2361-3361,PP No.:14357-14361.

6. R.Karthikeyan, & et all ”Neural Networks for Shortest Path Computation and Routing in Computer Networks” in the international journal of Engineering and Techniques, Volume 3 Issue 4, Aug 2017, ISSN:2395-1303,PP No.:86-91.

7. R.Karthikeyan, & et all ”An Sight into Virtual Techniques Private Networks & IP Tunneling” in the international journal of Engineering and Techniques, Volume 3 Issue 4, Aug 2017, ISSN:2395-1303,PP No.:129-133.

8. R.Karthikeyan, & et all “Routing Approaches in Mobile Ad-hoc Networks” in the International Journal of Research in Engineering Technology, Volume 2 Issue 5, Aug 2017, ISSN:2455-1341, Pg No.:1-7.

9. R.Karthikeyan, & et all “Big data Analytics Using Support Vector Machine Algorithm” in the International Journal of Innovative Research in Computer and Communication Engineering, Volume 6 Issue 9, Aug 2018, ISSN:2320 - 9798, Pg No.:7589 - 7594.

10. R.Karthikeyan, & et all “Defense and Confidentiality Smart Access for High Throughput in Mobile Internet of Things ” in the International Journal of Innovative Research in Computer and Communication Engineering, Volume 6 Issue 9, Aug 2018, ISSN:2320 - 9798, Pg No.:7222 - 7228. 11. R.Karthikeyan, & et all “Data Security of Network Communication Using Distributed Firewall in WSN ” in the International Journal of Innovative Research in Computer and Communication Engineering, Volume 6 Issue 7, July 2018, ISSN:2320 - 9798, Pg No.:6733 - 6737.

ISSN(Online): 2320-9801 ISSN (Print): 2320-9798

I

nternational

J

ournal of

I

nnovative

R

esearch in

C

omputer

and

C

ommunication

E

ngineering

(A High Impact Factor, Monthly, Peer Reviewed Journal) Website: www.ijircce.com

Vol. 7, Issue 8, August 2019

14.R.Karthikeyan &et all “Efficient Methodology and Applications of Dynamic Heterogeneous Grid Computing” in the International Journal of Innovative Research in Computer and Communication Engineering, Volume 7 Issue 2, Feb 2019, ISSN:2320 - 9798, Pg No.:1125 -1128.

15.R.Karthikeyan &et all “Entrepreneurship and Modernization Mechanism in Internet of Things” in the International Journal of Innovative Research in Computer and Communication Engineering, Volume 7 Issue 2, Feb 2019, ISSN:2320 - 9798, Pg No.:887 – 892.