DOI :

https://doi.org/10.32628/CSEIT195116Data Extraction in Malignant Environments

Amith Mandal1, Dr. R. P. Ramkumar2

1M. Tech Scholar, Department of CSE, Malla Reddy Engineering College (A), Telangana, India

2Professor, Department of CSE, Malla Reddy Engineering College (A) Telangana, India

ABSTRACT

Deliberate or inadvertent escape of classified data is undoubtedly one among the premier extreme security dangers that associations look inside the advanced period. The risk right now stretches out to the private lives: an abundance of private information is out there to interpersonal organizations and great telephone providers and is in a roundabout way exchanged to undependable outsider and fourth gathering applications. amid this work, a bland data genealogy structure LIME (Data Lineage in the Malicious Environment), is utilized for data stream over different elements that take two trademark, guideline parts (i.e., proprietor and buyer). It characterizes the exact security ensures required by such a data heredity component toward recognizable proof of a blameworthy substance, and decide the disentangling non-denial and trustworthiness suspicions. At that point create and break down a totally special dependable data exchange convention between two substances among noxious surroundings by expanding upon unmindful exchange, solid watermarking, and mark natives. At long last, an exploratory investigation to exhibit the helpfulness of convention what's more, apply structure to the important data run projections of learning outsourcing and the informal organizations. In general, LIME(Data Lineage in the Malicious Environment),lineage structure for information exchange, to be a key advance towards accomplishing responsibility by plan.

Keywords: Classified Data, LIME, Trademark, Malicious Environment, Micro Benchmarking, Pairing Based Cryptography, GNU

I. INTRODUCTION

Data Extraction avoidance is the type of solutions which can help an institute to apply control for the prevention of unwanted, unintentional or leakage of specific information to illegal entities inside or outside the institute. Here specific information may refer to the institute’s internal processing of documents, tactical business plans, academic property, economic statements, security policy, network architecture, blueprints etc.

extraction much easy as the impostor can now easily transfer the data.

We as a consumer find many apps on internet and we knowingly handle our data to the respective organizations but is our data really safe in the hands of the organization, what if any employee of the fellow organization decides to make a step that ruins the organizations name, what if any employee wantedly access your data and misuses it. The answer to that problem is represented in the following work which will determine the guilty entity with proof and held for explanation if any data extraction occurs. There are few safety mechanisms such associate secret writing of valuable data, however it's a proactive measure that cannot verify the info leak once it happens. Once the intruder decrypts the information then there's no potential live which will prevent the info leak of the personal data and business data by any desired measures. Following are few measures that we often see and there are a number of situations where the event is determined.

1. We should take facebook for instance wherever we can see assortment of outsiders that approaches our insight, if any outsider is experienced in learning spill it winds up impractical to call attention to or see that wherever extremely the data was misused as there square measure a few outsiders advertised.

2. Consultancies have all the valuable data of representatives/applicants caning to seek out work which outsider contracts another outsider then all the three organizations will right now approach the individual information then it may turn out to be horrendously difficult to undeniably relate one the three partnerships for learning spill, in each the circumstances witnesses can either don't target the exact learning safety or the intruder may attentively reveal classified data which cannot be tied to any of the handlers. At times there comes experience the explanatory procedures however it's horribly expensive and it also doesn't create a terribly sensible

outcome .Amid this distribution the prerequisite for general answerability instrument in learning exchange is demonstrated which may specially go with confirmable measures and control the condemn blameworthy. This philosophy of identification is named as learning root. Endeavors are made by numerous associations to utilize learning source inside the type of watermarking or including of false information or data in specially appointed way has not been that flourishing.

II. EXISTING SYSTEM

In the digital era, data outflow during intentional exposures, or un-intentional damage by untruthful staff and harmful external entities, always provide serious threats to organizations. it's not laborious to believe that this is simply the edge of an iceberg, as in most cases information of knowledge outflow go unreported thanks to concern of loss of client confidence or restraining penalties huge amount of digital data can be derived at nearly no price and might be unfold through the net in terribly short time to boot, the risk of obtaining or getting caught for information outflow or the extraction is very low, as there square measure presently nearly no answerableness mechanisms. For these reasons, the matter of information loss has reached a replacement dimension these days.

2.1 Disadvantages

2.2 Proposed System

In this work, the requirement for a general answerability mechanism in information transfers is got wind. This answerability are often directly related to demonstrably detective work a transmission history of information across multiple entities ranging from its origin. This can be called information place of origin, information lineage or supply tracing.

Here the matter is dignified of practically associating the problem to the extraction, and work on the information lineage methodologies to unravel the matter of knowledge leak in numerous leak situations. This system defines LIME, a generic information lineage framework for information flow across multiple entities within the malicious atmosphere.

Here it's observe that entities in information flows assume one amongst 2 roles: owner or shopper and introduce an extra role within the sort of auditor, whose task is to see a problem for any data extraction, and summarize the accurate properties for communication between these roles.In the process Associate in nursing non-mandatory non-repudiation assumption created between two house owners, Associate in nursing and non-mandatory trust (honesty) assumption created by the auditor concerning the house owners.

The second contribution, associate in nursing responsible for information transfer protocol to provably and securely transfer valuable information between the two parties is bestowed. It deals with associate in nursing and untrusted sender, associate in nursing and untrusted receiver situation related to data transfer between any two customers, the protocol we use is an interesting combination of the sturdy watermarking, oblivious transfer, and signature primitives.

2.2.1 Advantages of Proposed System

The key advantage of this representation is that it applies answerableness right from design; i.e., it drives the system designer to contemplate doable information leakages and also the corresponding answerableness constraints at the look stage. This helps to beat the prevailing state of affairs wherever purpose to the information, owner assigns the digital sign and stores it inside the internet and in addition performs the subsequent operations to transfer image with its digital signature with supported title, description

Below are the few operations an Owner can perform 1 .Upload image or a file,

2. Verify image or file details 3. View Consumer requests and 4. Deleted image details

3.2 Web Server

The Web service supplier manages an internet to supply information storage service. And performs the subsequent operations reminiscent of Storing all image files with their signature, read all image Files with its details, view all image comments, and view all information house owners and Users, read all attackers.

A web server will be monitoring the system held responsible for a secure login including analyzing of all the data that has been transferred between two entities

The basic operations that are performed by the web server are as follows

2. View all the files details 3. View the attacked files 4. View Download history 3.3 Auditor

Auditor has capability to access, manage or monitor the transferred knowledge or data beneath the entrustment of information of owner, auditor has experience and capabilities that a standard user doesn't have, for occasionally auditing the outsourced knowledge. This audit service is considerably necessary for digital forensics and credibleness in Web and performs the subsequent operations

1.View image/file details 2. View Attacked files

3. Block or unblock an attacker 4. View messages from a consumer

3.4 Data Consumer

The net user of an Organization encompasses a great quantity of data to be stored in servers and have the permissions to access and manipulate and keep image and its data.

The consumer can search information and accessing the image data if he's approved and performs the subsequent operations

1.View all the files 2. Download a file

3. Send download request 4. Send message to the auditor

IV. TECHNIQUES USED

4.1 Micro Benchmarking

The execution of the convention is done in as a proof-of-idea and to determine its result. For the unaware exchange sub-convention is executed to the convention utilizing the PBC library, which itself makes utilization of the GMP library. For marks BLS plot is executed, likewise utilizing the PBC library. For symmetric encryption a usage of AES from the Crypto++ library is utilized. For watermarking a

usage of the Cox calculation is utilized for vigorous picture and set the negative factor, which decides the quality of the watermark, to a estimation of 0.1.Now execute the explore different avenues regarding diverse parameters to examine the execution. The sender and beneficiary piece of the convention will be executed in a similar program, i.e., don't break down system sending, yet just computational execution.

4.2 PBC (Pairing Based Cryptography)

Blending based cryptography is a generally youthful territory of cryptography that rotates around a certain capacity with exceptional properties.

The PBC (Pairing-Based-Cryptography) library is a free C library based on the GMP library that plays out the numeric activities and fundamental matching based on cryptosystems.

The PBC library is planned to be the foundation of executions of matching based cryptosystems, in this manner speed and portability are key objectives. It provides schedules by comparing it to the elliptic bend era, elliptic bend number juggling and blending calculation. Due to the GMP library, in spite of being composed in C, pairings times are shabby. On a 1GHz Pentium III:

• Fastest matching is 11ms

• Short matching is 31ms

The PBC library can likewise be utilized to construct customary cryptosystems.

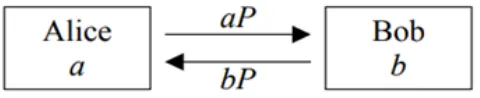

Fig 1. Two party one round key agreement protocol

3.3 The GNU Multiple Precision Arithmatic Library GMP is attentively designed to be fast for small number juggling, in task on marked whole numbers, objective numbers, and gliding point numbers. there's no sensible cutoff to the accuracy aside from those verifiable by the available memory inside the machine GMP keeps running on. GMP highlights a made arrangement of capacities, and furthermore the capacities have a regular interface. The fundamental target applications for GMP are cryptography applications and investigation, web security applications, unadulterated science frameworks, process unadulterated arithmetic examination, and so forth.

GMP is thoroughly intended to be as brisk as feasible, each for little operands and for huge operands. The speed is accomplished by exploitation full words in light of the fact that the fundamental number juggling sort, by exploitation brisk calculations, with to a great degree enhanced gathering code for the principal regular inward circles for loads of CPUs, and by a general weight on speed. The primary GMP unharness was made in 1991. it's consistently created and kept up, with a substitution unharness

concerning once every year. Since variant about six, GMP is appropriated beneath the twin licenses, wildebeest LGPL v3 and wildebeest GPL v2. These licenses make the library freed to utilize, share, and enhance, and allow you to kick the bucket the outcome. The wildebeest licenses offer flexibilities, however conjointly set firm limitations on the use with without non programs. GMP's primary target stages are Unix-type frameworks, reminiscent of GNU/Linux, Solaris, HP-UX, waterproof OS X/Darwin, BSD, AIX, and so on. It is additionally celebrated internationally to figure on Windows in each 32-bit furthermore, 64-bit mode.

3.4 BLS Scheme

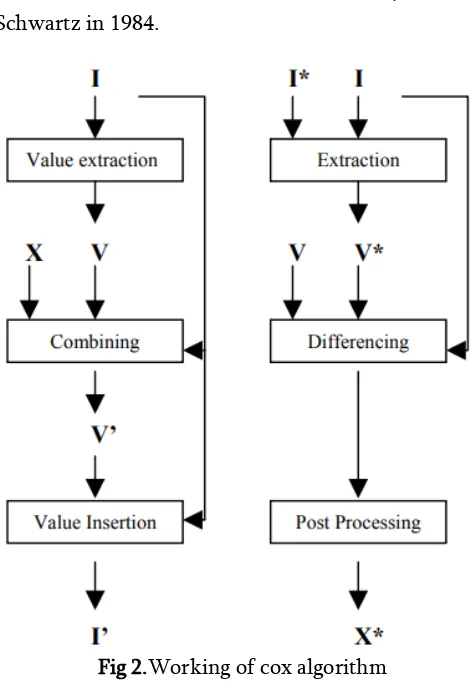

named the "Cox– Zucker machine" by Charles Schwartz in 1984.

Fig 2.Working of cox algorithm

3.6 Possible Data Distortion

Here a simple cacophonic algorithmic program is utilized. Here the picture is spilt into n similarly estimated squares. In any case, once utilized a powerful watermark for the modest components (that will be that the - issue used by the Cox algorithmic program is zero.5), varieties between neighboring components ended up noticeable in spite of the way that the one watermarks zone unit in cognoscible.This outcome turns out to be considerably more grounded when numerous emphases as decided. Now and again, this bending would potentially affect the ease of use of the record. The Cox algorithmic program is utilized with relates alpha issue of zero.1 and no mutilation is noticeable. It would entrance to examine if this drawback are regularly dodged by exploitation extra detailed cacophonic calculations. As most watermarking plans assemble utilization of the nearness of information

inside the archive, this can be not an inconsequential assignment.

V. RESULTS

Fig 3. file for auditor

Fig 4.web server

Fig 6. Attacker being detected and blocked

Fig 2. A message request from a non guilty consumer

VI. CONCLUSION AND FUTURE ENHANCEMENT

We are blessing LIME, a model for capable data exchange over different elements. We tend to plot teaming up parties, their interrelationships and give a solid inner portrayal for a data exchange convention utilizing a novel blend of negligent exchange, strong watermarking and computerized marks. We have a tendency to demonstrate its rightness and show that it's feasible by giving little seat checking comes about. By introducing a general relevant structure, we have a tendency to present answerableness as right on time as inside the style area of a data exchange foundation. Despite the fact that LIME doesn't effectively prevent data release, it presents responsive answerableness. Along these lines, it'll deflect vindictive gatherings from unseaworthy individual reports and can energize legitimate (however reckless) gatherings to

create the predefined assurance for touchy data. LIME is adaptable as we have a tendency to separate between beyond any doubt senders (typically proprietors) and untrusted senders (generally buyers). Inside the instance of the beyond any doubt sender, a dreadfully simple convention with next to no overhead is achievable. The untrusted sender needs a considerable measure of modern convention, however the outcomes don't appear to be bolstered put stock in presumptions furthermore, along these lines they should be prepared to change over an unbiased substance (e.g. a judge). Our work conjointly rouses any investigation on data release recognition procedures for differed report sorts furthermore, projections. To Illustrate, it'll be a propelling future examination heading to style a certain ancestry convention for determined data.

VII.REFERENCES

[1]. "Chronology of data breaches," http://www.pri"acyrights.org/data-breach. [2]. "Data breach cost,"

http://www.symantec.com/about/news/release/ article.jsp?prid=2011030801

[3]. "Pri"acy rights clearinghouse," http://www.pri"acyrights.org.

[4]. "Electronic Pri"acy Information Center (EPIC)," http://epic.org, 1994.

[5]. "Facebook in Pri"acy Breach," http://online.wsj.com/article/SB1000142405270 2304772804575558484075236968.html.

[6]. "Offshore outsourcing," http://www.computerworld.com/s/article/1099 38/Offshore outsourcing cited in Florida data leak.

Processing (IWSSIP 2006). Citeseer, 2006, pp. 53-56.

[8]. P. Papadimitriou and H. Garcia-Molina, "Data leakage detection," Knowledge and Data Engineering, IEEE Transactions on, "ol. 23, no. 1, pp. 51-63, 2011.

[9]. "Pairing-Based Cryptography Library (PBC)," http://crypto.stanford.edu/pbc.

[10]. I. J. Cox, J. Kilian, F. T. Leighton, and T. Shamoon, "Secure spread spectrum watermarking for multimedia," Image Processing, IEEE Transactions on, "ol. 6, no. 12, pp. 1673-1687, 1997.

[11]. B. Pfitzmann and M. Waidner, "Asymmetric fingerprinting for larger collusions," in Proceedings of the 4th ACM conference on Computer and communications security, ser. CCS ’97, 1997, pp. 151-160.

[12]. S. Goldwasser, S. Micali, and R. L. Ri"est, "A digital signature scheme secure against adapti"e chosen-message attacks," SIAM J. Comput., "ol. 17, no. 2, pp. 281-308, 1988.

[13]. A. Adelsbach, S. Katzenbeisser, and A.-R. Sadeghi, "A computational model for watermark robustness," in Information Hiding. Springer, 2007, pp. 145-160.

[14]. J. Kilian, F. T. Leighton, L. R. Matheson, T. G. Shamoon, R. E. Tarjan, and F. Zane, "Resistance of digital watermarks to collusi"e attacks," in IEEE International Symposium on Information Theory, 1998, pp. 271-271.

[15]. M. Naor and B. Pinkas, "Efficient obli"ious transfer protocols," in Proceedings of the Twelfth Annual ACM-SIAM Symposium on Discrete Algorithms, 2001, pp. 448-457

Cite this article as :

Amith Manda, Dr. R. P. Ramkumar, "Data Extraction in Malignant Environments", International Journal of Scientific Research in Computer Science, Engineering and Information Technology (IJSRCSEIT), ISSN : 2456-3307, Volume 5 Issue 1, pp. 123-130, January-February 2019. Available at doi : https://doi.org/10.32628/CSEIT195116