DOI : 10.32628/CSEIT195626

Deep Learning Neural Implementation Research Equation

Soumen Chakraborty

Department of Information Technology, MCKV Institute of Engineering, MAKAUT, West Bengal, India Email : [email protected]

ABSTRACT

Continuous years, Simulated intelligence is grasped in a wide extent of zones where it exhibits its pervasiveness over customary standard based figurings. These strategies are being facilitated in advanced revelation systems with the target of supporting or despite superseding the central component of security examiners. Regardless of the way that the absolute robotization of acknowledgment and examination is an enticing goal, the ampleness of AI in computerized security must be evaluated significant learning is logically dominating in the field of Software. Regardless, many open issues still remain to be investigated. How do researchers consolidate significant learning with the due constancy. We present an examination, directed to security specialists, of AI methodologies associated with the acknowledgment of interference, malware, and spam. The goal is twofold: to assess the present improvement of these courses of action and to perceive their basic obstacles that balance a brief gathering of AI computerized acknowledgment plans.

Keywords : AI, Significant Learning, Advanced Security, Not Well Arranged Learning, Counts, Pseudo Code ,Traffic Identification, Feature Learning, Deep Learning, Protocol Classification.

I. INTRODUCTION

Despite the enabling proportion of papers and scenes, there exists little survey examination on significant learning in SE, e.g., the fundamental strategy to organize significant learning into SE, the SE stages empowered by significant learning, the premiums of SE specialists on significant learning, etc. Understanding these request is noteworthy. From one perspective, it empowers masters and researchers to get a graph appreciation of significant learning in SE. Of course, authorities and experts can become progressively realistic significant learning models according to the examination.

Hence, this examination drives a book list examination on research papers in the field of SE that usage significant learning techniques.

A. Sporadic Protocol Detection

incorporate xj to the covered layer feature hi. At the point when some Wij(1) ascends to zero, that infers xj is purposeless to the further inducing. As shown by the enormity of parameters, the dedication, that is, the centrality of every byte in x can be surveyed. We take the aggregate of each and every altogether weight |Wij(1)| concerning every center point in the data layer as

Where n is the amount of centers in the data layer, for instance the length of payloads in our worry.

Around there we present seven issues that must be considered before picking whether to apply ML computations in NOC and SOC. We can anticipate that, at the present front line, no figuring can be viewed as totally independent with no human supervision. We substantiate each issue through exploratory results from composing or one of a kind preliminaries performed on huge endeavors. We begin by depicting the testing circumstances of our preliminaries, and the estimations considered for appraisal. The investigations base on DGA Detection and Network Intrusion Detection, and impact two ML figurings: Random Forest and Feedforward Fully Connected Deep Neural Network.

For DGA Detection, we make two stamped planning datasets containing both DGA and non-DGA spaces. The past dataset contains DGA made through known techniques, while the last contains DGA made using later systems. Non-DGA spaces are subjectively picked among the Cisco Umbrella top-1 million. We report the significant estimations of the readiness datasets in Table 2. Likewise, we manufacture a testing dataset of 10,000 regions isolated evenhandedly from all of the planning datasets. We furthermore rely upon a veritable and unlabelled dataset made out of appropriate around 20,000 spaces come to by a tremendous affiliation. The features removed for this dataset are: n-gram

Dataset DGA technique DGA count non-DGA check 1 Well- known 21,355 20,227

Well-known and recent 37,673 8,120

streams that raised alerts by the endeavor arrange IDS and examined by a territory ace. Significant estimations of these arrangement datasets are represented in Table 3.

Both are set up with the third dataset portrayed in Table 3 and took a stab at the framework intrusion area testing dataset. To get progressively refined results, we reiterate the planning and trial of these classifiers on different events using different topologies. In Table 4, we exhibit the request results achieved by each methodology; for the FNN we report the results gained by the best topology involving

Accuracy =TN, where TN connotes certified negatives in 1.024 neurons spread transversely more than 4

covered layers. The RF classifier performed better than the FNN, with a F1-score of practically 0.8, against the 0.6 obtained by the FNN. Our takeaway is that security directors should not be charmed by the engaging neuronal multi-layer approach offered by Deep Learning, as a bit of these strategies may even now be adolescent for computerized security.

F1-score Precision Recall 0.7985 0.8727 0.736 0.6085 0.7708 0.5027

B. General versus unequivocal locators

Instance of using significant learning in SE

Significant learning is a procedure that licenses computational models made out of different getting ready layers to learn depictions of data with various elements of reflection [14]. Here, we present an instance of using significant learning in SE. In this point of reference, we apply the significant learning model AutoEncoder on a typical SE task, i.e., bug reports rundown [10].

1. E data social event picks the available data for a SE task. For bug report layout, the commonly available data are bug reports. Each bug report predominantly contains a title, a delineation of the bug, and a couple of comments.

2. E data preprocessing removes the noises in E data. For a bug report, the English stop words and some programming-unequivocal ones are the clatters. Furthermore, incredibly short sentences are also bustles, since they may be uninformative.

3. Model decision and setup select sensible significant learning models for SE data and pick model structures, e.g., the amount of layers and neural units of each layer. The extensively used significant learning models consolidate AutoEncoder, CNN, RNN, etc (explained in Fig. 3). These standard models regularly have a couple of varieties, e.g., LSTM, Bi-LSTM, and thought based RNN are old style varieties of RNN. In this model, AutoEncoder is picked. AutoEncoder generally has a symmetric plan, i.e., the amount of neural units of information and yield layers are the equal. The yield layer is portrayed for instance to repeat the data layer. The amount of neural units of disguised layers lessens as towards the focal point of the framework. In the wake of setting up, the covered states hold the key information for redoing the data layer.

4. Input advancement changes SE data into vectors for significant learning models. For bug report rundown, examiners learn the word repeat in bug reports and change the word repeat regards into vectors. These vectors are seen as an arrangement set for AutoEncoder.

Detection Deep Learning

We inspect the accumulated papers to investigate the status of significant learning in SE.

The normality of significant learning in SE

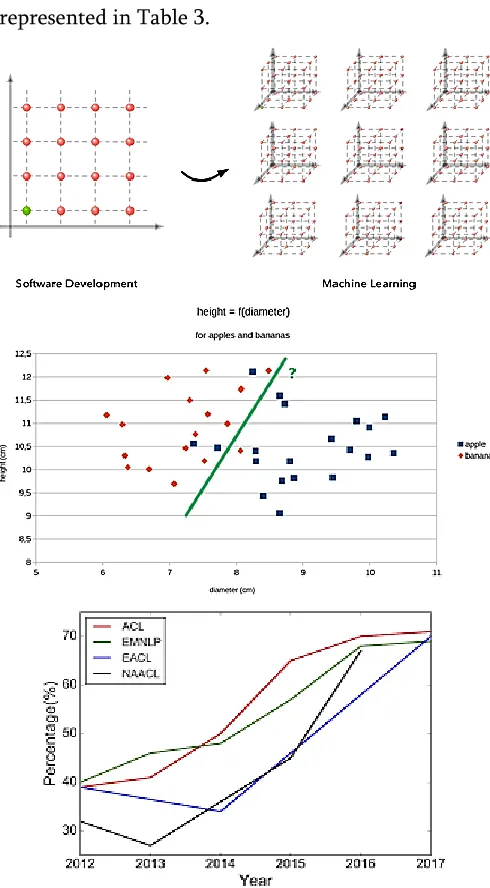

We count the amount of research papers each year and the areas of the dispersions in Fig. 2(a) and Fig. 2(b) independently. In Fig. 2(a), we find that significant learning attracts little thought in SE for a long time, i.e., just shy of 3 papers are appropriated each year before 2015. The reason may be that yet significant learning performs well on picture taking care of, talk affirmation, etc., it puts aside exertion for the specialists and

Top settings of the creations

To wrap up, significant learning is transcendent in SE. It pulls in the thought from both SE and AI social order.

C. The way to deal with arrange significant learning into SE

As the regularity of significant learning in SE, we analyze the best way to deal with consolidate significant learning into SE. Fig. 3 exhibits the name of significant learning models and the amount of papers using these models. We find that most examinations (55 papers) direct move standard significant learning models into SE, including AutoEncoder, CNN, DBN, RNN and an essential totally related DNN. Meanwhile, the old style varieties of these models in AI are in like manner for the most part used (28 papers, for instance, SDAEs, LSTM, etc. The above models are used in 84.7% research papers. Other than using a lone model, solidified significant learning

D. Concerns to use significant learning in SE

practicability of significant getting the hang of, including the ampleness, profitability, understandability, and testability. These issues may affect the improvement of significant learning in SE.

Sufficiency and Efficiency. Late examinations exhibit that by applying a direct streamlining specialist Differential Evolution to change SVM, it achieves tantamount results with significant learning on interfacing the data unit in Stack Overflow [7]. Specifically, this procedure is on various occasions faster than planning significant learning models.

To close, the practicability of significant learning is so far a rising and intriguing issue for SE specialists and researchers.

II.CONCLUSION

Significant adjusting starting late accept a huge activity for enlightening SE endeavors. In this examination, we direct a book reference examination on the status of significant learning. A comparative wonder is moreover observed on code proposition, in which a changing n-gram language model unequivocally expected for programming beats RNN and LSTM [6]. Regardless of the way that techniques like disengaged getting ready and circulated processing may to some degree settle the efficiency issue [10], there is up 'til now a tradeoff between significant learning and other lightweight, space express models. Such tradeoff drives a significant examination on significant learning, e.g., what sorts of SE data and endeavors are proper for significant learning and how to arrange the territory data into significant learning.

III.REFERENCES

[1]. Madala, K., Gaither, D., Nielsen, R., and Do, H. Computerized ID of segment state progress model components from necessities. Global Requirements Engineering Conference Workshops. 2017. (pp.386-392).

[2]. Joulin, An., and Mikolov, T. Gathering algorithmic examples with stack-increased intermittent nets. NIPS'15. (pp. 190-198). [3]. Tong, H., Liu, B., and Wang, S. Programming

imperfection expectation utilizing stacked denoising autoencoders and two-organize outfit learning. IST'17.

[4]. Pang, Y., Xue, X., and Wang, H. Anticipating helpless programming segments through profound neural system. Universal Conference on Deep Learning Technologies. 2017. (pp.6-10). [5]. Dahl, G. E., Stokes, J. W., Deng, L., and Yu, D. Enormous scale malware order utilizing arbitrary projections and neural systems. Worldwide Conference on Acoustics, Speech and Signal Processing. 2013. (pp. 3422-3426). [6]. Hellendoorn, V. J., and Devanbu, P. Are

profound neural systems the best decision for displaying source code? FSE'17. (pp.763-773). [7]. Fu, W., and Menzies, T. Simple over hard: a

contextual investigation on profound learning. FSE'17. (pp.49-60).

[8]. Kahng, M., Andrews, P.Y., Kalro, An., and Chau, D.H.P. ActiVis: visual investigation of industry-scale profound neural system models. IEEE Transactions on Visualization and Computer Graphics, 24(1), 2018. (pp.88-97).

[9]. Tian, Y., Pei, K., Jana, S., Ray, B. DeepTest: robotized testing of profound neural- organize driven independent vehicles. ICSE'18.

[10]. Li, X., Jiang, H., Liu, D., Ren, Z., and Li, G. Unsupervised profound bug report synopsis. ICPC'18.

[11]. Munassar, N.M.A., and Govardhan, A. A correlation between five models of programming designing. Global Journal of Computer Science Issues, 5, 2010. (pp.95-101). [12]. Dwivedi, A. K., Tirkey, A., Ray, R. B., and Rath,

[13]. Huang, X., Ho, D., Ren, J., and Capretz, L.F. Improving the COCOMO model utilizing a neuro-fluffy methodology. Connected Soft Computing, 7(1), 2007. (pp.29-40).

[14]. Bengio, Y., LeCun, Y., Hinton, G. Profound Learning. Nature. 2015, 521. (pp.436- 444).

Cite this article as :

Soumen Chakraborty, "Deep Learning Neural Implementation Research Equation", International Journal of Scientific Research in Computer Science, Engineering and Information Technology (IJSRCSEIT), ISSN : 2456-3307, Volume 5 Issue 6, pp. 294-300, November-December 2019.