Available Online At www.ijpret.com

INTERNATIONAL JOURNAL OF PURE AND

APPLIED RESEARCH IN ENGINEERING AND

TECHNOLOGY

A PATH FOR HORIZING YOUR INNOVATIVE WORK

MOUSE NAVIGATION CONTROL USING HAND GESTURE WITH NEURAL NETWORK

VAISHALI M. GULHANE, MADHAVI S. JOSHI

1. M. E. Student, Digital Electronics, PRMIT & R Badnera, Amravati, Maharashtra, India. 2. Professor, Electronics Department, PRMIT & R Badnera, Amravati, Maharashtra, India.

Accepted Date:

27/02/2013

Publish Date:

01/04/2013

Keywords

Mouse replacement,

American Sign Language,

Neural Network,

Gesture modeling,

Image Processing

Corresponding Author Ms. Vaishali M. Gulhane

Abstract

A hand gesture recognition system can serve as an interface between computer and human. This work presents a technique for a human-computer interface based on hand gesture recognition that can recognize some static gestures from the American Sign Language hand alphabet or numbers. The objective of this research is to develop a feed-forward algorithm for the recognition of hand gestures. Hand gestures provide an attractive alternative to conventional interface devices for human-computer interaction (HCI), offering the ability to interact with computers in more natural and intuitive ways. The hand by itself can communicate much more information than computer mice, joysticks, and similar devices, allowing a greater number of possibilities for computer interaction. This research produced a working prototype of a robust hand tracking and gesture recognition system that can be used in

INTRODUCTION

Computers are used by many people, either at work or in their spare time. Special input and output devices have been designed over the years to ease communication between computers and humans; the two best known are the keyboard and the mouse. Every new device can be seen as an attempt to make the computer more intelligent and to enable humans to communicate with it in more sophisticated ways. This has been possible thanks to the result-oriented efforts of computer professionals to create successful human-computer interfaces [1]. In recent years the field of computer vision has devoted considerable research effort to the detection and recognition of faces and hand gestures. Hand gestures are an appealing way to interact with such systems because they are already a natural part of how we communicate, and they do not require the user to hold or physically manipulate special hardware [2]. Recognizing gestures is a complex task that involves many aspects, such as motion modeling, motion analysis, pattern recognition, machine learning, and even psycholinguistic studies [3]. Generally, gestures can be classified into static gestures and dynamic gestures. Static gestures are usually described in terms of hand shapes, and dynamic gestures are generally described according to hand movements [4]. The gesture recognition system we propose is a step toward a more sophisticated recognition system that enables such varied uses as menu-driven interaction, augmented reality, or even recognition of sign language.

Freeman and Roth [5] introduced a method to recognize hand gestures based on a pattern recognition technique developed by McConnell that employs histograms of local orientation. Naidoo and Glaser [6] developed a system that recognizes static hand gestures against complex backgrounds, based on South African Sign Language. Triesch and von der Malsburg [7] employed the elastic graph matching technique to classify hand postures against complex backgrounds. Just [8] proposed applying to the hand posture classification and recognition tasks an approach that has been successfully used

on the local non-parametric pixel operator. The idea is to make computers understand human language and to develop user-friendly human-computer interfaces (HCI). Making a computer understand speech, facial expressions, and human gestures are some steps toward this goal. This project aims to interpret human gestures by creating an HCI. An overview of the gesture recognition system is given in the following sections.

MOUSE REPLACEMENT

This paper presents an innovative idea, called mouse for handless human (MHH), that uses the camera as an alternative to the mouse. The mouse operations are controlled by hand gestures captured by the camera. According to the article "Gesture Recognition Technology for Games Poised for Breakthrough" on the VentureBeat website (http://venturebeat.com) [9], in the near future users will likely be able to control objects on the screen with empty hands, as shown in Figure 1.

If the hand signals fall in a predetermined set, and the camera views a close-up of the hand [10], we may use an example-based approach combined with a simple method of analyzing hand signals called orientation histograms [11].

Figure 1: Controlling mouse with Gesture

The user shows the system one or more

examples of a specific hand shape. The

computer forms and stores the

corresponding orientation histograms. In

the run phase, the computer compares the

orientation histogram of the current image

with each of the stored templates and

selects the category of the closest match, or

interpolates between templates, as

appropriate. This method should be robust

against small differences in the size of the

hand but probably would be sensitive to

changes in hand orientation.
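
The training and run phases described above can be sketched roughly as follows. This is a minimal illustration, not the implementation used in [11]: the bin count, the gradient-magnitude threshold, the Euclidean distance measure, and the function names `orientation_histogram`, `train`, and `classify` are all assumptions made for this example, and interpolation between templates is omitted.

```python
import numpy as np

def orientation_histogram(image, bins=36, min_magnitude=1e-3):
    """Histogram of local gradient orientations, normalized to sum to 1."""
    img = np.asarray(image, dtype=float)
    gy, gx = np.gradient(img)            # derivatives along rows and columns
    magnitude = np.hypot(gx, gy)
    angle = np.arctan2(gy, gx)           # orientation in [-pi, pi]
    mask = magnitude > min_magnitude     # ignore flat, low-contrast regions
    hist, _ = np.histogram(angle[mask], bins=bins, range=(-np.pi, np.pi))
    total = hist.sum()
    return hist / total if total > 0 else hist.astype(float)

def train(examples):
    """Training phase: store one mean histogram per gesture label.

    examples maps a label to a list of example images (2-D arrays).
    """
    return {label: np.mean([orientation_histogram(im) for im in images], axis=0)
            for label, images in examples.items()}

def classify(image, templates):
    """Run phase: return the label of the closest stored template."""
    h = orientation_histogram(image)
    return min(templates, key=lambda label: np.linalg.norm(h - templates[label]))
```

Because each histogram is normalized, an image of the same shape at a somewhat different size yields a similar histogram, whereas rotating the hand shifts the histogram bins. This is consistent with the robustness and sensitivity noted above.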

AMERICAN SIGN LANGUAGE

American Sign Language is the language of

choice for most deaf people in the United

States. It is part of the “deaf culture” and

includes its own system of puns, inside

sign languages of the world [12]. Just as an

English speaker would have trouble

understanding someone speaking Japanese,

a speaker of ASL would have trouble

understanding the Sign Language of

Sweden. ASL also has its own grammar that

is different from English. ASL consists of

approximately 6000 gestures of common

words with finger spelling used to

communicate obscure words or proper

nouns. Finger spelling uses one hand and 26

gestures to communicate the 26 letters of

the alphabet. Another interesting

characteristic that will be ignored by this

project is the ability that ASL offers to

describe a person, place or thing and then

point to a place in space to temporarily

store for later reference. ASL uses facial

expressions to distinguish between

statements, questions and directives. The

eyebrows are raised for a question, held

normal for a statement, and furrowed for a

directive. This would be feasible in a full

real-time ASL dictionary. Some of the signs

can be seen in Figure 2 below.

Figure 2: ASL examples

Full ASL recognition systems (words, phrases) incorporate data gloves. Takashi and Kishino discuss a data glove-based system that could recognize 34 of the 46 Japanese gestures (user dependent) using a joint angle and hand orientation coding technique. A separate test was created from five iterations of the alphabet by the user, with each gesture well separated in time. While these systems are technically interesting, they suffer from a lack of training.

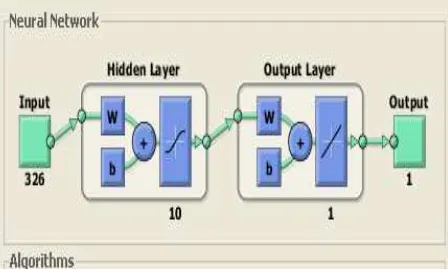

ARTIFICIAL NEURAL NETWORK

An Artificial neural network is an

information processing system that has

certain performance characteristics in

common with biological neural networks.

Artificial neural networks have been

developed as generalizations of

or neural biology, based on the assumptions

that [13]:

1. Information processing occurs at many

simple elements called neurons.

2. Signals are passed between neurons over

connection links.

Each neuron applies an activation function (usually nonlinear) to its net input [14]. Figure 3 shows a simple artificial neuron. Neural networks have been used in various fields, such as identification, speech recognition, vision, classification, and control systems, as well as in the medical field for breast cancer cell analysis and EEG and ECG analysis.

Figure 3: A simple Artificial Neuron
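
A single artificial neuron of this kind can be sketched in a few lines. The sketch assumes the standard model in which the net input is a weighted sum of the incoming signals plus a bias; the sigmoid activation and the names `sigmoid` and `neuron_output` are illustrative choices, not taken from this paper.

```python
import numpy as np

def sigmoid(z):
    """Logistic activation, mapping any real net input into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def neuron_output(inputs, weights, bias=0.0, activation=sigmoid):
    """Output of one neuron: activation applied to the net input.

    The net input is assumed to be the weighted sum of the input
    signals plus a bias term.
    """
    net_input = np.dot(weights, inputs) + bias
    return activation(net_input)
```

For example, `neuron_output([0.0, 0.0], [1.0, 1.0])` has a net input of zero, so the sigmoid returns 0.5.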

Today neural networks can be trained to

solve problems that are difficult for

conventional computers or human beings.

The supervised training methods are

commonly used, but other networks can be

obtained from unsupervised

training techniques or from direct design

methods.

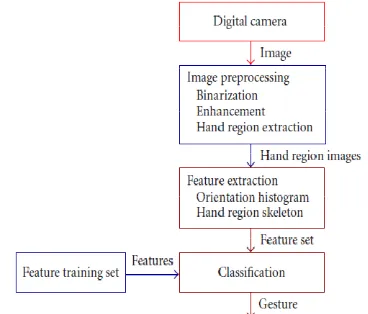

PROPOSED SYSTEM

In this paper, a new approach to static hand gesture recognition is proposed. The presented system is based on one powerful hand feature in combination [15].

The hand gesture area is separated from the background using the well-known skin color segmentation method that is also used in face recognition; the contour of the hand image is then used as a feature that describes the hand shape. The general process of the proposed method is composed of three main parts:

1. A preprocessing step to focus on the gesture.

2. A feature extraction step that uses the hand contour of the gesture image; it is based on an algorithm proposed by Joshi and Sivaswamy [16]. The hand contour will

3. A classification step: the feature of the unknown gesture is produced and fed into the neural network.
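
The first and last of these steps can be illustrated with a short sketch. Everything specific in it is an assumption made for illustration, since the paper does not give these details: the fixed RGB threshold rule is one commonly cited skin color heuristic, and the one-hidden-layer network with `tanh` units and a softmax output is a generic feed-forward classifier. The names `skin_mask` and `feed_forward` are hypothetical.

```python
import numpy as np

def skin_mask(rgb):
    """Preprocessing: boolean mask of skin-like pixels in an RGB image.

    Uses a simple fixed RGB threshold rule (an assumed heuristic,
    not the segmentation rule specified by this paper).
    """
    rgb = np.asarray(rgb, dtype=int)     # avoid uint8 overflow in r - g
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    spread = rgb.max(axis=-1) - rgb.min(axis=-1)
    return ((r > 95) & (g > 40) & (b > 20) & (spread > 15)
            & (np.abs(r - g) > 15) & (r > g) & (r > b))

def feed_forward(features, w_hidden, b_hidden, w_out, b_out):
    """Classification: forward pass of a one-hidden-layer network.

    Returns one probability per gesture class (softmax output).
    """
    hidden = np.tanh(w_hidden @ features + b_hidden)
    scores = w_out @ hidden + b_out
    exp = np.exp(scores - scores.max())  # subtract max for numerical stability
    return exp / exp.sum()
```

In use, the weights would come from supervised training on labeled gesture features; the predicted gesture is then the class with the highest output probability.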

Figure 4: Block diagram of the recognition

system

The gesture recognition process is illustrated in Figure 4. The hand region obtained after the preprocessing stage is used as the primary input data for the feature extraction step of the gesture recognition algorithm.

FEATURE EXTRACTION

The feature extraction aspect of image analysis seeks to identify inherent characteristics that are used to describe the object or attributes of the object. Feature extraction operates on two-dimensional image arrays but produces a list of descriptions, or a 'feature vector' [17]. For posture recognition (static hand gestures), features such as fingertips, finger directions, and hand contours can be extracted. Features are thus selected implicitly and automatically by the recognizer [18]. Figure 5 shows the feature extraction aspect of image analysis. In this paper we select the hand contour as a good feature to describe the hand gesture shape.
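
To make the step from a two-dimensional image array to a feature vector concrete, the sketch below extracts the contour of a binary hand mask and summarizes it as a fixed-length vector. It is an assumed illustration only and is not the contour detection scheme of Joshi and Sivaswamy [16]: the contour is taken as the mask pixels that touch the background, and the feature vector is a normalized centroid-distance signature. The names `contour_pixels` and `contour_feature_vector` are hypothetical.

```python
import numpy as np

def contour_pixels(mask):
    """Mask pixels that have at least one 4-neighbor outside the mask."""
    mask = np.asarray(mask, dtype=bool)
    padded = np.pad(mask, 1, constant_values=False)
    # A pixel is interior when all four of its neighbors are in the mask.
    interior = (padded[:-2, 1:-1] & padded[2:, 1:-1]
                & padded[1:-1, :-2] & padded[1:-1, 2:])
    return mask & ~interior

def contour_feature_vector(mask, n_bins=16):
    """Fixed-length descriptor: farthest contour distance per angular bin.

    Distances are measured from the contour centroid and divided by the
    largest one, so the vector does not depend on the hand's scale.
    """
    rows, cols = np.nonzero(contour_pixels(mask))
    if rows.size == 0:
        return np.zeros(n_bins)
    cy, cx = rows.mean(), cols.mean()
    dist = np.hypot(rows - cy, cols - cx)
    angle = np.arctan2(rows - cy, cols - cx)
    bin_index = ((angle + np.pi) / (2 * np.pi) * n_bins).astype(int) % n_bins
    features = np.zeros(n_bins)
    np.maximum.at(features, bin_index, dist)   # keep the largest distance per bin
    peak = features.max()
    return features / peak if peak > 0 else features
```

A vector of this form has the same length for every gesture image, which is what a neural network with a fixed number of input units requires.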

Figure 5: The feature extraction aspect of

image analysis

EXPECTED RESULT

Many attempts to recognize static hand gesture shapes from images [19] have achieved fairly good results, but mainly through either very high computation or the use of specialized devices. The aim of this system is to achieve relatively good results while considering the trade-off between time and accuracy.

However we will aim to achieve very good

CONCLUSIONS

In this paper we propose a method of classifying static hand gestures using the hand image contour, where the only features are those of low-level computation. This method is robust against similar static gestures. The major goal of this research is to develop a system that will aid the interaction between human and computer through the use of hand gestures as control commands.

REFERENCES

1. R. Sharma and T. S. Huang, "Visual Interpretation of Hand Gestures for Human-Computer Interaction: A Review", IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 19, no. 7, 1997.

2. J. MacLean, J. R. Herpers, "Fast Hand Gesture Recognition for Real-Time Teleconferencing Applications", 1999.

3. Y. Wu and T. Huang, "Vision Based Gesture Recognition: A Review", International Gesture Workshop, GW99, France, 1999.

4. Chang, J. Chen, W. Tai, and C. Han, "New Approach for Static Gesture Recognition", Journal of Information Science and Engineering 22, 1047-1057, 2006.

5. W. Freeman and M. Roth, "Orientation Histograms for Hand Gesture Recognition", Technical Report 94-03a, Mitsubishi Electric Research Laboratories, Cambridge Research Center, 1994.

6. S. Naidoo, C. Omlin, and M. Glaser, "Vision-Based Static Hand Gesture Recognition Using Support Vector Machines", 1998.

7. J. Triesch and C. von der Malsburg, "Robust Classification of Hand Postures Against Complex Backgrounds", Proc. Second International Conference on Automatic Face and Gesture Recognition, Killington, VT, 1996.

8. Just, "Two-Handed Gestures for Human-Computer Interaction", PhD thesis, 2006.

9. (http://venturebeat.com/). Gallaudet University Press (2004). 1,000 Signs of Life: Basic ASL for Everyday Conversation. Gallaudet University Press.

10. [Bauer & Hienz, 2000] Relevant feature

recognition. Department of Technical Computer Science, Aachen University of Technology, Aachen, Germany, 2000.

11. William T. Freeman, Michael Roth, "Orientation Histograms for Hand Gesture Recognition", IEEE Intl. Wkshp. on Automatic Face and Gesture Recognition, Zurich, June 1995.

12. [Starner, Weaver & Pentland, 1998] Real-time American Sign Language recognition using a desk- and wearable computer-based video. IEEE Transactions on Pattern Analysis and Machine Intelligence, 1998, pages 1371-1375.

13. L. Fausett, "Fundamentals of Neural Networks: Architectures, Algorithms, and Applications", Prentice-Hall, Inc., 1994, pp. 304-315.

14. K. Murakami, H. Taguchi, "Gesture Recognition using Recurrent Neural Networks", in CHI '91 Conference Proceedings (pp. 237-242), ACM, 1991.

15. S. Ahmad, "A usable real-time 3D hand tracker", in Asilomar Conference on Signals, Systems and Computers, pages 1257-1261, Pacific Grove, CA, 1994.

16. G. Joshi and J. Sivaswamy, "A simple scheme for contour detection", 2004.

17. Hans-Jeorg Bullinger (1998). Human-Computer Interaction. www.engadget.com.

18. G. Awcock and R. Thomas, "Applied Image Processing", Macmillan Press Ltd, London, 1995, p. 148.

19. Vladimir I. Pavlovic, Rajeev Sharma, Thomas S. Huang, "Visual Interpretation of Hand Gestures for Human-Computer Interaction: A Review", IEEE Transactions on Pattern Analysis and Machine Intelligence,